[PyTorch] Tutorial: Transfer learning

Transfer learning

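In practice, very few people train an entire CNN from scratch, because a sufficiently large dataset is hard to come by. Instead, it is common to start from a ConvNet pretrained on a large dataset such as ImageNet (1.2 million images, 1000 categories) and use it either as the initialization or as a fixed feature extractor for the task of interest.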

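There are two main transfer learning scenarios:

- Fine-tuning the ConvNet: instead of random initialization, the network is initialized from a pretrained model, such as one trained on the 1000-class ImageNet dataset. The rest of the training proceeds as usual.
- ConvNet as a fixed feature extractor: the weights of the whole network are frozen except for the final fully connected layer. That layer is replaced by a new one with random weights, and only this layer is trained.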

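Loading the data

We use the torchvision and torch.utils.data packages to load the data.

The task here is to train a model that classifies ants and bees, with about 120 training images and 75 validation images per class.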
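The script below reads the images with `datasets.ImageFolder`, which expects one subdirectory per class inside each split and assigns class indices from the sorted subdirectory names. The following sketch mimics that mapping with the standard library only. The helper names `find_classes` and `make_demo_layout` are hypothetical, and the `train/ants`, `train/bees` layout is an assumption about how the dataset folder is organized.

```python
import os
import tempfile


def find_classes(split_dir):
    """Return sorted class names and a name -> index mapping for one split."""
    classes = sorted(
        entry for entry in os.listdir(split_dir)
        if os.path.isdir(os.path.join(split_dir, entry))
    )
    class_to_idx = {name: idx for idx, name in enumerate(classes)}
    return classes, class_to_idx


def make_demo_layout(root):
    """Create an empty train/val tree shaped like the assumed dataset layout."""
    for split in ("train", "val"):
        for cls in ("bees", "ants"):  # created out of order on purpose
            os.makedirs(os.path.join(root, split, cls))


with tempfile.TemporaryDirectory() as root:
    make_demo_layout(root)
    classes, class_to_idx = find_classes(os.path.join(root, "train"))
    print(classes)       # ['ants', 'bees']
    print(class_to_idx)  # {'ants': 0, 'bees': 1}
```

With this layout, label 0 corresponds to ants and label 1 to bees, which is the order `class_names` would follow in the script.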

Finetune

from __future__ import print_function, division

import torch
import torch.nn as nn
import torch.optim as optim
from torch.optim import lr_scheduler
import torch.backends.cudnn as cudnn
import numpy as np
import torchvision
from torchvision import datasets, models, transforms
import matplotlib.pyplot as plt
import time
import os
import copy

cudnn.benchmark = True
plt.ion()  # interactive mode

# Data augmentation and normalization for training
# Just normalization for validation
data_transforms = {
    'train': transforms.Compose([
        transforms.RandomResizedCrop(224),
        transforms.RandomHorizontalFlip(),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),
    ]),
    'val': transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),
    ]),
}

# dataset and dataloader
data_dir = "../../../datasets/hymenoptera_data"
image_datasets = {
    x: datasets.ImageFolder(os.path.join(data_dir, x), data_transforms[x])
    for x in ['train', 'val']}
dataloaders = {
    x: torch.utils.data.DataLoader(image_datasets[x], batch_size=4,
                                   shuffle=True, num_workers=4)
    for x in ['train', 'val']}
dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}
class_names = image_datasets['train'].classes

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Visualize a few images
# Let's visualize a few training images so as to understand the data augmentations.
def imshow(inp, title=None):
    """Display a normalized image tensor."""
    inp = inp.numpy().transpose((1, 2, 0))  # CHW -> HWC
    mean = np.array([0.485, 0.456, 0.406])
    std = np.array([0.229, 0.224, 0.225])
    inp = std * inp + mean  # undo transforms.Normalize
    inp = np.clip(inp, 0, 1)
    plt.imshow(inp)
    if title is not None:
        plt.title(title)
    plt.pause(0.001)  # pause a bit so that plots are updated


# Uncomment to display one batch of training images:
# inputs, classes = next(iter(dataloaders['train']))
# out = torchvision.utils.make_grid(inputs)  # make a grid from the batch
# imshow(out, title=[class_names[x] for x in classes])
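`imshow` multiplies by the standard deviation and adds the mean because `transforms.Normalize` computes `(x - mean) / std` per channel. As a rough illustration, the round trip can be checked on a single RGB pixel with plain Python. The functions `normalize` and `unnormalize` and the sample pixel are made up for this sketch; only the mean and std values come from the script.

```python
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]


def normalize(pixel):
    """Per-channel (x - mean) / std, as transforms.Normalize does."""
    return [(v - m) / s for v, m, s in zip(pixel, MEAN, STD)]


def unnormalize(pixel):
    """Inverse of normalize, clipped to [0, 1] like np.clip in imshow."""
    restored = [v * s + m for v, m, s in zip(pixel, MEAN, STD)]
    return [min(max(v, 0.0), 1.0) for v in restored]


pixel = [0.2, 0.5, 0.9]  # hypothetical RGB values in [0, 1]
normalized = normalize(pixel)
restored = unnormalize(normalized)
print(normalized)
print(restored)  # matches pixel up to floating-point rounding
```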

# training
def train_model(model, criterion, optimizer, scheduler, num_epochs=25):
    since = time.time()

    best_model_wts = copy.deepcopy(model.state_dict())
    best_acc = 0.0

    for epoch in range(num_epochs):
        print('Epoch {}/{}'.format(epoch, num_epochs-1))
        print('-' * 10)

        # Each epoch has a training and validation phase
        for phase in ['train', 'val']:
            if phase == 'train':
                model.train()  # Set model to training mode
            else:
                model.eval()  # Set model to evaluate mode

            running_loss = 0.0
            running_corrects = 0

            # Iterate over data.
            for inputs, labels in dataloaders[phase]:
                inputs = inputs.to(device)
                labels = labels.to(device)

                # zero the parameter gradients
                optimizer.zero_grad()

                # forward
                # track history only in train
                with torch.set_grad_enabled(phase == 'train'):
                    outputs = model(inputs)
                    _, preds = torch.max(outputs, 1)
                    loss = criterion(outputs, labels)

                    # backward + optimize only if in training phase
                    if phase == 'train':
                        loss.backward()
                        optimizer.step()

                # statistics
                running_loss += loss.item() * inputs.size(0)
                running_corrects += torch.sum(preds == labels.data)

            if phase == 'train':
                scheduler.step()

            epoch_loss = running_loss / dataset_sizes[phase]
            epoch_acc = running_corrects.double() / dataset_sizes[phase]

            print(f'{phase} Loss: {epoch_loss:.4f} Acc: {epoch_acc:.4f}')

            # deep copy the model
            if phase == 'val' and epoch_acc > best_acc:
                best_acc = epoch_acc
                best_model_wts = copy.deepcopy(model.state_dict())

        print()

    time_elapsed = time.time() - since
    print(
        f'Training complete in {time_elapsed // 60:.0f}m {time_elapsed % 60:.0f} s')
    print(f'Best val Acc: {best_acc:.4f}')

    # load best model weights
    model.load_state_dict(best_model_wts)
    return model
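In `train_model`, `nn.CrossEntropyLoss` returns the mean loss of a batch by default, so the loop multiplies it by `inputs.size(0)` before summing and divides by the dataset size at the end. The sketch below, using made-up per-sample losses and a hypothetical helper `epoch_average`, suggests why this weighting matters when the last batch is smaller than the others.

```python
def epoch_average(batch_means, batch_sizes):
    """Size-weighted average, mirroring running_loss / dataset_sizes[phase]."""
    running = 0.0
    for mean_loss, size in zip(batch_means, batch_sizes):
        running += mean_loss * size
    return running / sum(batch_sizes)


# Hypothetical per-sample losses: 10 samples, batch size 4, last batch has 2.
per_sample = [0.9, 0.7, 0.5, 0.3, 0.2, 0.4, 0.6, 0.8, 1.0, 2.0]
batches = [per_sample[0:4], per_sample[4:8], per_sample[8:10]]
batch_means = [sum(b) / len(b) for b in batches]
batch_sizes = [len(b) for b in batches]

weighted = epoch_average(batch_means, batch_sizes)
naive = sum(batch_means) / len(batch_means)  # ignores batch sizes
true_mean = sum(per_sample) / len(per_sample)

print(f"weighted={weighted:.4f} naive={naive:.4f} true={true_mean:.4f}")
```

The weighted value agrees with the true per-sample mean, while the unweighted mean of batch means overweights the small final batch.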

# Generic function to display predictions for a few images

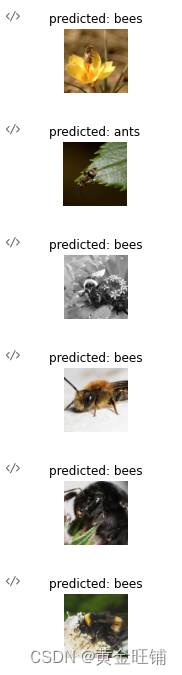

def visualize_model(model, num_images=6):
    was_training = model.training
    model.eval()
    images_so_far = 0
    fig = plt.figure()

    with torch.no_grad():
        for i, (inputs, labels) in enumerate(dataloaders['val']):
            inputs = inputs.to(device)
            labels = labels.to(device)

            outputs = model(inputs)
            _, preds = torch.max(outputs, 1)

            for j in range(inputs.size()[0]):
                images_so_far += 1
                ax = plt.subplot(num_images // 2, 2, images_so_far)
                ax.axis('off')
                ax.set_title(f'predicted: {class_names[preds[j]]}')
                imshow(inputs.cpu().data[j])

                if images_so_far == num_images:
                    model.train(mode=was_training)
                    return
        # restore the original mode (the keyword is `mode`, not `model`)
        model.train(mode=was_training)

# Load a pretrained model and reset final fully connected layer.
# Note: recent torchvision releases deprecate pretrained=True in favor of the weights argument.
model_finetune = models.resnet18(pretrained=True)
num_ftrs = model_finetune.fc.in_features
# here the size of each output sample is set to 2
# it can be generalized to nn.Linear(num_ftrs, len(class_names))
model_finetune.fc = nn.Linear(num_ftrs, 2)

model_finetune = model_finetune.to(device)

criterion = nn.CrossEntropyLoss()

# Observe that all parameters are being optimized
optimizer_finetune = optim.SGD(
    model_finetune.parameters(), lr=0.001, momentum=0.9)

# Decay LR by a factor of 0.1 every 7 epochs
exp_lr_scheduler = lr_scheduler.StepLR(
    optimizer_finetune, step_size=7, gamma=0.1)

# train and evaluate
model_finetune = train_model(model=model_finetune,
                             criterion=criterion, optimizer=optimizer_finetune,
                             scheduler=exp_lr_scheduler, num_epochs=25)

# Visualize the model's predictions
visualize_model(model_finetune)

Output (truncated):

train Loss: 0.6425 Acc: 0.6230

val Loss: 0.2763 Acc: 0.9150

Epoch 1/24

----------

train Loss: 0.4966 Acc: 0.8074

val Loss: 0.3128 Acc: 0.8693

Epoch 2/24

----------

train Loss: 0.4395 Acc: 0.8402

val Loss: 0.2923 Acc: 0.8562

Epoch 3/24

----------

train Loss: 0.3743 Acc: 0.8402

val Loss: 0.2049 Acc: 0.9281

Epoch 4/24

----------

train Loss: 0.2655 Acc: 0.8852

val Loss: 0.1841 Acc: 0.9346

Epoch 5/24

----------

...

val Loss: 0.1862 Acc: 0.9412

Training complete in 1m 0 s

Best val Acc: 0.9477
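The script above uses `StepLR` with `step_size=7` and `gamma=0.1`, and calls `scheduler.step()` once per epoch after the training phase. Under that usage the learning rate in epoch `e` (counting from 0) should follow `base_lr * gamma ** (e // step_size)`. The sketch below only reproduces this arithmetic in plain Python; `step_lr` is a hypothetical helper, not the PyTorch class.

```python
import math


def step_lr(base_lr, step_size, gamma, epoch):
    """Closed-form step decay: multiply by gamma every step_size epochs."""
    return base_lr * gamma ** (epoch // step_size)


# Same settings as the script: lr=0.001, step_size=7, gamma=0.1, 25 epochs.
schedule = [step_lr(0.001, 7, 0.1, epoch) for epoch in range(25)]

for epoch in (0, 6, 7, 13, 14, 21, 24):
    print(f"epoch {epoch:2d}: lr = {schedule[epoch]:.0e}")
```

By this formula, epochs 0 to 6 train at 1e-3, epochs 7 to 13 at 1e-4, epochs 14 to 20 at 1e-5, and the remaining epochs at 1e-6, so most of the weight movement happens in the first seven epochs.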

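ConvNet as a fixed feature extractor

Now we freeze the whole network except the final layer. Setting requires_grad = False on the parameters freezes them, so that no gradients are computed for them in backward().

Key code

# set requires_grad = False
for param in model_conv.parameters():
    param.requires_grad = False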
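To get a feel for how little is trained once the backbone is frozen, one can count the parameters of the replacement layer by hand. This is a back-of-the-envelope sketch: `linear_param_count` is a hypothetical helper, and the value 512 for `fc.in_features` is an assumption about torchvision's ResNet-18 (the script reads it from the model instead of hardcoding it).

```python
def linear_param_count(in_features, out_features, bias=True):
    """Number of weights (plus optional biases) in a fully connected layer."""
    return in_features * out_features + (out_features if bias else 0)


NUM_FTRS = 512  # assumed value of model_conv.fc.in_features for ResNet-18

new_head = linear_param_count(NUM_FTRS, 2)     # ants vs. bees head
old_head = linear_param_count(NUM_FTRS, 1000)  # original ImageNet head

print(f"trainable parameters in the new head: {new_head}")
print(f"parameters in the replaced ImageNet head: {old_head}")
```

Under these assumptions only about a thousand parameters receive gradients, which is consistent with the shorter training time reported for this variant.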

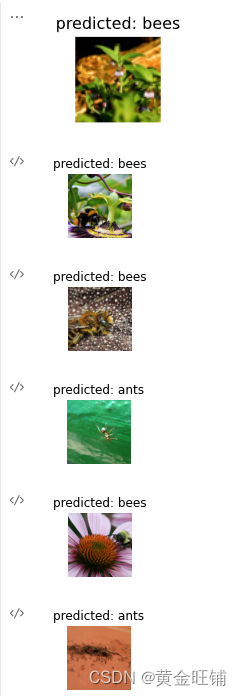

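### What differs from the Finetune script (imports, data loading, imshow, train_model, and visualize_model are unchanged)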

# Load a pretrained model and reset final fully connected layer.
# Replaces these lines of the Finetune script:
# model_finetune = models.resnet18(pretrained=True)
# num_ftrs = model_finetune.fc.in_features
model_conv = models.resnet18(pretrained=True)

# set requires_grad = False to freeze every pretrained parameter
for param in model_conv.parameters():
    param.requires_grad = False

# Parameters of newly constructed modules have requires_grad=True by default
num_ftrs = model_conv.fc.in_features
# here the size of each output sample is set to 2
# it can be generalized to nn.Linear(num_ftrs, len(class_names))
model_conv.fc = nn.Linear(num_ftrs, 2)

model_conv = model_conv.to(device)

criterion = nn.CrossEntropyLoss()

# Only the new fc layer is actually updated: frozen parameters never
# receive gradients, so the optimizer skips them.
optimizer_conv = optim.SGD(
    model_conv.parameters(), lr=0.001, momentum=0.9)

# Decay LR by a factor of 0.1 every 7 epochs
exp_lr_scheduler = lr_scheduler.StepLR(
    optimizer_conv, step_size=7, gamma=0.1)

# train and evaluate
model_conv = train_model(model=model_conv,
                         criterion=criterion, optimizer=optimizer_conv,
                         scheduler=exp_lr_scheduler, num_epochs=25)

visualize_model(model_conv)

Output (truncated):

Epoch 0/24

----------

train Loss: 0.6819 Acc: 0.6270

val Loss: 0.1885 Acc: 0.9477

Epoch 1/24

----------

train Loss: 0.4212 Acc: 0.7992

val Loss: 0.1760 Acc: 0.9477

Epoch 2/24

----------

train Loss: 0.5423 Acc: 0.7828

val Loss: 0.7761 Acc: 0.7059

Epoch 3/24

----------

train Loss: 0.6406 Acc: 0.7377

val Loss: 0.2656 Acc: 0.9216

Epoch 4/24

----------

train Loss: 0.5401 Acc: 0.7951

val Loss: 0.2485 Acc: 0.8954

...

val Loss: 0.1679 Acc: 0.9542

Training complete in 0m 45 s

Best val Acc: 0.9542