SQL刷题宝典-MySQL速通力扣困难题

| 阿里云国内75折 回扣 微信号:monov8 |

| 阿里云国际,腾讯云国际,低至75折。AWS 93折 免费开户实名账号 代冲值 优惠多多 微信号:monov8 飞机:@monov6 |

📢作者 小小明-代码实体

📢博客主页https://blog.csdn.net/as604049322

📢欢迎点赞 👍 收藏 ⭐留言 📝 欢迎讨论

📢本文链接https://xxmdmst.blog.csdn.net/article/details/128509713

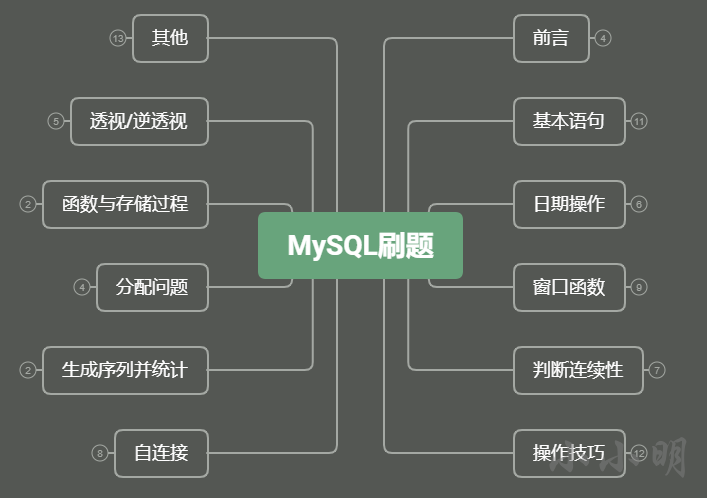

本手册目录

文章目录

前言

本人写SQL断断续续也有5年多了对于刷题这种事情一直都是非常不屑的态度“写SQL这么简单的事情也需要刷不是看一眼就会了吗”

直到我最近我真的刷了力扣的SQL题才发现其实还是有太多不熟悉的技巧。最近花了近一个多月的时间刷完了LeetCode上220道SQL数据库的题感觉收获还是很多下面在二刷后整理了本手册。

本手册主干

力扣刷题地址https://leetcode.cn/problemset/database/

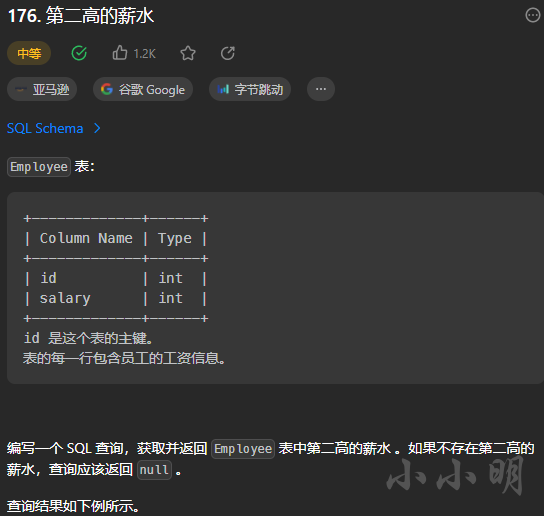

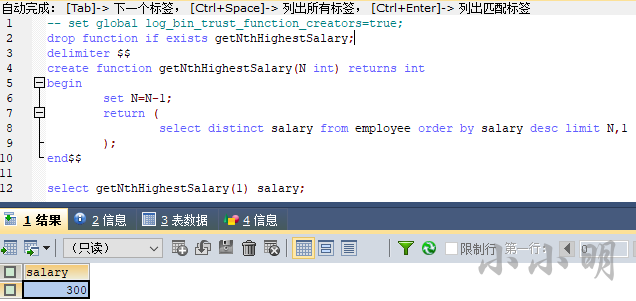

以《176. 第二高的薪水》为例看看题目格式

Markdown导入数据库python脚本

力扣的SQL绝大部分会员可见为了保证各题的数据能够很方便的导入本地数据库我编写了一个Python脚本以上述题目为例代码如下

from urllib.parse import quote_plus

import pandas as pd

import re

from sqlalchemy import create_engine

from sqlalchemy.types import *

from sqlalchemy import types

def md2sql(sql_text, type_md, tbname, db_config):

host = db_config["host"]

database = db_config["database"]

user_name = db_config["user_name"]

password = quote_plus(db_config["password"])

port = db_config["port"]

engine = create_engine(

f'mysql+pymysql://{user_name}:{password}@{host}:{port}/{database}')

dtypes = {}

if type_md and type_md.strip():

type_txt = " ".join(dir(types))

lines = type_md.strip().splitlines()

for line in lines:

if "---" in line or "Column Name" in line:

continue

k, v = re.split(" *\| *", line.strip(" |"), maxsplit=1)

a, b = re.split("(?=\(|$)", v, 1)

dtypes[k.lower()] = eval(re.search(a, type_txt, re.I).group(0) + b)

lines = [line for line in sql_text.strip().splitlines() if "---" not in line]

header = [c.lower() for c in re.split(" *\| *", lines[0])[1:-1]]

data = []

for line in lines[1:]:

row = [None if e.lower() == "null" else e

for e in re.split(" *\| *", line.strip(" |"))]

data.append(row)

df = pd.DataFrame(data, columns=header)

with engine.connect() as conn:

print(tbname)

df.to_sql(name=tbname.lower(), con=conn, if_exists='replace', index=False,

dtype=dtypes)

table = pd.read_sql_table(tbname.lower(), conn)

return table

db_config = {

"host": "localhost",

"database": "leetcode",

"user_name": "root",

"password": '123456',

"port": 3306

}

type_md = """

+-------------+------+

| Column Name | Type |

+-------------+------+

| id | int |

| salary | int |

+-------------+------+

"""

sql_text = """

+----+--------+

| id | salary |

+----+--------+

| 1 | 100 |

| 2 | 200 |

| 3 | 300 |

+----+--------+

"""

df = md2sql(sql_text, type_md, "Employee", db_config)

print(df)

将上述脚本保存为md2sql.py。

根据自己本地数据库的实际情况修改参数。后面要导入其他表时也只需要修改前3个参数。

导入上述数据后测试一下如下SQL语句

select (select distinct salary from employee order by salary desc limit 1,1) SecondHighestSalary;

SecondHighestSalary

---------------------

200

顺利通过。

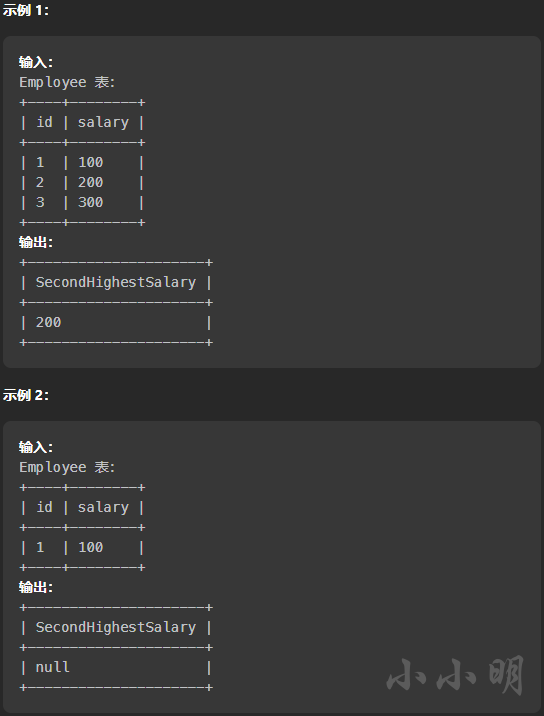

SQL Schema批量导入

此外LeetCode还提供了SQL Schema导入语句

只不过这些语句没有;结尾无法直接批量执行但是我们依然可以使用python脚本批量逐条执行

from sqlalchemy import create_engine

from urllib.parse import quote_plus

host = 'localhost'

database = 'leetcode'

user_name = 'root'

password = '123456'

port = 3306

engine = create_engine(

f'mysql+pymysql://{user_name}:{quote_plus(password)}@{host}:{port}/{database}')

def SQL_Schema_import(sql_txt):

with engine.connect() as conn:

n = 0

for line in sql_txt.strip().splitlines():

result = conn.execute(line.replace("'None'", "null"))

n += result.rowcount

print(f"共插入{n}条数据原有数据已被覆盖")

sql_txt = """

Create table If Not Exists Candidate (id int, name varchar(255))

Create table If Not Exists Vote (id int, candidateId int)

Truncate table Candidate

insert into Candidate (id, name) values ('1', 'A')

insert into Candidate (id, name) values ('2', 'B')

insert into Candidate (id, name) values ('3', 'C')

insert into Candidate (id, name) values ('4', 'D')

insert into Candidate (id, name) values ('5', 'E')

Truncate table Vote

insert into Vote (id, candidateId) values ('1', '2')

insert into Vote (id, candidateId) values ('2', '4')

insert into Vote (id, candidateId) values ('3', '3')

insert into Vote (id, candidateId) values ('4', '2')

insert into Vote (id, candidateId) values ('5', '5')

"""

SQL_Schema_import(sql_txt)

从SQL Schema复制的SQL无法自动修改同名表的Schema若已存在Schema不同的同名表只能手动删除表后再执行上述代码或者自行添加自动删除的代码。

本文个别题使用SQL Schema这种导入形式但由于Markdown形式更清晰所以整体上还是都使用了Markdown的导入形式。

基本配置

若我们直接引用未聚合的字段例如

select

a.id,name,group_concat(b.id) ids

from Candidate a join vote b

on a.id=b.candidateId

group by a.id;

会报出如下错误

Expression #2 of SELECT list is not in GROUP BY clause and contains nonaggregated column ‘leetcode.a.name’ which is not functionally dependent on columns in GROUP BY clause; this is incompatible with sql_mode=only_full_group_by

要直接引用未参与聚合的字段我们可以使用聚合函数

select

a.id,

any_value(name) name,

group_concat(b.id) ids

from Candidate a join vote b

on a.id=b.candidateId

group by a.id;

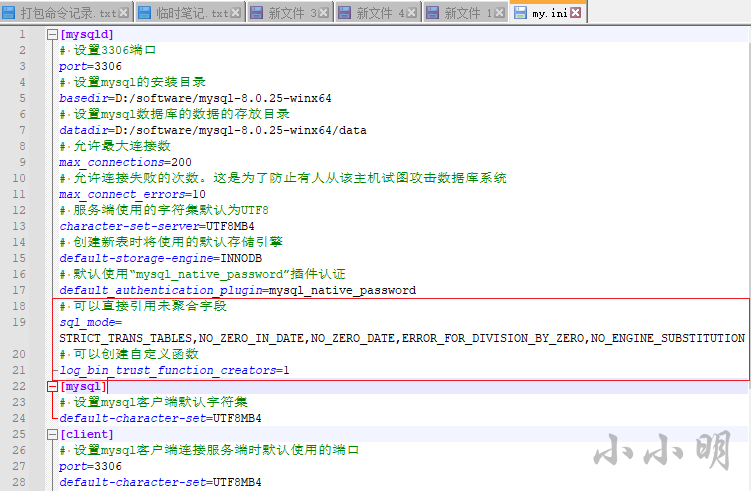

另外就是修改mysql的配置修改my.ini配置文件的 [mysqld] 配置

# 可以直接引用未聚合字段

sql_mode=STRICT_TRANS_TABLES,NO_ZERO_IN_DATE,NO_ZERO_DATE,ERROR_FOR_DIVISION_BY_ZERO,NO_ENGINE_SUBSTITUTION

另外就是我们创建自定义函数时可能会报出如下错误

This function has none of DETERMINISTIC, NO SQL, or READS SQL DATA in its declaration and binary logging is enabled (you *might* want to use the less safe log_bin_trust_function_creators variable)

这时除了临时修改

set global log_bin_trust_function_creators=TRUE;

还可以修改my.ini配置文件的 [mysqld] 配置

# 可以创建自定义函数

log_bin_trust_function_creators=1

重启后即可生效。

参考资料

MySQL语法查询网站https://www.begtut.com/mysql/mysql-tutorial.html

该网站可以查看MySQL按关键字分类的语法

MySQL8.0的安装

本手册全部在MySQL8.0版本测试可以参考以下方法安装

不卸载原有mysql直接安装mysql8.0

https://xxmdmst.blog.csdn.net/article/details/113204880

MySQL视频教程推荐https://www.bilibili.com/video/BV1iq4y1u7vj/

对应的资料下载https://pan.baidu.com/s/1v44IeG8kwqbVrpwAGRPytw?pwd=1234

基本语句

delete删除操作

数据

type_md = """

+-------------+---------+

| Column Name | Type |

+-------------+---------+

| id | int |

| email | varchar(20) |

+-------------+---------+

"""

sql_text = """

+----+------------------+

| id | email |

+----+------------------+

| 1 | john@example.com |

| 2 | bob@example.com |

| 3 | john@example.com |

+----+------------------+

"""

df = md2sql(sql_text, type_md, "Person", db_config)

print(df.to_markdown(index=False))

要求 删除 所有重复的电子邮件只保留一个id最小的唯一电子邮件。

我们需要先查找出重复的且id不是最小的记录

select p1.* from person p1 join person p2

on p1.email=p2.email and p1.id>p2.id

然后将select修改为delete即可

delete p1.* from person p1 join person p2

on p1.email=p2.email and p1.id>p2.id;

不使用自连接的方法

delete from person where id not in(

select * from (select min(id) from person group by email) t

)

需要嵌套一层子查询是因为直接删除会报出如下错误You can't specify target table 'person' for update in FROM clause

update更新操作

数据

type_md = """

| id | int |

| name | varchar(20) |

| sex | ENUM('m','f') |

| salary | int |

"""

sql_text = """

| id | name | sex | salary |

+----+------+-----+--------+

| 1 | A | m | 2500 |

| 2 | B | f | 1500 |

| 3 | C | m | 5500 |

| 4 | D | f | 500 |

"""

df = md2sql(sql_text, type_md, "Salary", db_config)

print(df)

使用 单个 update 语句交换所有的 'f' 和 'm' 即将所有 'f' 变为 'm' 反之亦然

update salary set sex=if(sex="f","m","f");

case when的应用

数据

type_md = """

| name | varchar(5) |

| value | int |

"""

sql_text = """

| name | value |

| ---- | ----- |

| x | 66 |

| y | 77 |

"""

df = md2sql(sql_text, type_md, "Variables", db_config)

print(df)

type_md = """

| left_operand | varchar(5) |

| operator | enum('<','>','=') |

| right_operand | varchar(5) |

"""

sql_text = """

| left_operand | operator | right_operand |

| ------------ | -------- | ------------- |

| x | > | y |

| x | < | y |

| x | = | y |

| y | > | x |

| y | < | x |

| x | = | x |

"""

df = md2sql(sql_text, type_md, "Expressions", db_config)

print(df)

查询表 Expressions 的布尔表达式。

select

e.*,

case when(

case operator

when ">" then l.value>r.value

when "=" then l.value=r.value

when "<" then l.value<r.value

end

) then "true" else "false"

end as value

from expressions e

join variables l on e.left_operand=l.name

join variables r on e.right_operand=r.name;

以上SQL展示了case when的两种写法结果

left_operand operator right_operand value

------------ -------- ------------- --------

x = x true

y < x false

y > x true

x = y false

x < y true

x > y false

union all应用

数据

type_md = """

| player_id | int |

| player_name | varchar(20) |

"""

sql_text = """

| player_id | player_name |

| --------- | ----------- |

| 1 | Nadal |

| 2 | Federer |

| 3 | Novak |

"""

df = md2sql(sql_text, type_md, "Players", db_config)

print(df)

type_md = """

| year | int |

| Wimbledon | int |

| Fr_open | int |

| US_open | int |

| Au_open | int |

"""

sql_text = """

| year | Wimbledon | Fr_open | US_open | Au_open |

| ---- | --------- | ------- | ------- | ------- |

| 2018 | 1 | 1 | 1 | 1 |

| 2019 | 1 | 1 | 2 | 2 |

| 2020 | 2 | 1 | 2 | 2 |

"""

df = md2sql(sql_text, type_md, "Championships", db_config)

print(df)

查询出每一个球员赢得大满贯比赛的次数。结果不包含没有赢得比赛的球员的ID 。

select

a.player_id,player_name,count(1) grand_slams_count

from(

select Wimbledon player_id from Championships

union all

select Fr_open from Championships

union all

select US_open from Championships

union all

select Au_open from Championships

) a join players using(player_id)

group by a.player_id,player_name

player_id player_name grand_slams_count

--------- ----------- -------------------

1 Nadal 7

2 Federer 5

数据

type_md = """

| team_id | int |

| team_name | varchar(20) |

"""

sql_text = """

| team_id | team_name |

| ------- | ----------- |

| 10 | Leetcode FC |

| 20 | NewYork FC |

| 30 | Atlanta FC |

| 40 | Chicago FC |

| 50 | Toronto FC |

"""

df = md2sql(sql_text, type_md, "Teams", db_config)

print(df)

type_md = """

| match_id | int |

| host_team | int |

| guest_team | int |

| host_goals | int |

| guest_goals | int |

"""

sql_text = """

| match_id | host_team | guest_team | host_goals | guest_goals |

| -------- | --------- | ---------- | ---------- | ----------- |

| 1 | 10 | 20 | 3 | 0 |

| 2 | 30 | 10 | 2 | 2 |

| 3 | 10 | 50 | 5 | 1 |

| 4 | 20 | 30 | 1 | 0 |

| 5 | 50 | 30 | 1 | 0 |

"""

df = md2sql(sql_text, type_md, "Matches", db_config)

print(df)

在所有比赛之后计算所有球队的比分。积分奖励方式如下:

- 如果球队赢了比赛(即比对手进更多的球)就得 3 分。

- 如果双方打成平手(即与对方得分相同)则得 1 分。

- 如果球队输掉了比赛(例如比对手少进球)就 不得分 。

查询每个队的 team_idteam_name 和 num_points。

返回的结果根据 num_points 降序排序如果有两队积分相同那么这两队按 team_id 升序排序。

select

b.team_id,

any_value(b.team_name) team_name,

ifnull(sum(num_points),0) num_points

from(

select

host_team team_id,

if(host_goals>guest_goals,3,host_goals=guest_goals) num_points

from Matches

union all

select

guest_team team_id,

if(host_goals<guest_goals,3,host_goals=guest_goals) num_points

from Matches

) a right join teams b on a.team_id=b.team_id

group by b.team_id

order by num_points desc,b.team_id;

team_id team_name num_points

------- ----------- ------------

10 Leetcode FC 7

20 NewYork FC 3

50 Toronto FC 3

30 Atlanta FC 1

40 Chicago FC 0

区间统计

数据

type_md = """

| session_id | int |

| duration | int |

"""

sql_text = """

| session_id | duration |

| ---------- | -------- |

| 1 | 30 |

| 2 | 199 |

| 3 | 299 |

| 4 | 580 |

| 5 | 1000 |

"""

df = md2sql(sql_text, type_md, "Sessions", db_config)

print(df)

统计访问时长区间分别为 “[0-5>”, “[5-10>”, “[10-15>” 和 “15 or more” 单位分钟的会话数量。

select '[0-5>' bin, sum(duration<300) total from Sessions

union all

select '[5-10>', sum(300<=duration and duration<600) from Sessions

union all

select '[10-15>', sum(600<=duration and duration<900) from Sessions

union all

select '15 or more', sum(duration>=900) from Sessions;

bin total

---------- --------

[0-5> 3

[5-10> 1

[10-15> 0

15 or more 1

不使用union

select

a.bin,

count(b.bin) total

from(values row("[0-5>"),row("[5-10>"),row("[10-15>"),row("15 or more")) a(bin)

left join(

select

case

when duration<300 then '[0-5>'

when duration<600 then '[5-10>'

when duration<900 then '[10-15>' else '15 or more'

end `bin`

from sessions

) b using(`bin`)

group by a.bin;

数据

type_md = """

| account_id | int |

| income | int |

"""

sql_text = """

| account_id | income |

| ---------- | ------ |

| 3 | 108939 |

| 2 | 12747 |

| 8 | 87709 |

| 6 | 91796 |

"""

df = md2sql(sql_text, type_md, "Accounts", db_config)

print(df)

查询来报告每个工资类别的银行账户数量。 工资类别如下

"Low Salary"所有工资 严格低于20000美元。"Average Salary"包含 范围内的所有工资[$20000, $50000]。"High Salary"所有工资 严格大于50000美元。

结果表 必须 包含所有三个类别。 如果某个类别中没有帐户则报告 0 。

select "Low Salary" category,count(1) accounts_count from accounts where income<20000

union all

select "Average Salary" category,count(1) from accounts where income between 20000 and 50000

union all

select "High Salary" category,count(1) from accounts where income>50000;

或

select "Low Salary" category,sum(income<20000) accounts_count from accounts

union all

select "Average Salary" category,sum(income between 20000 and 50000) from accounts

union all

select "High Salary" category,sum(income>50000) from accounts;

category accounts_count

-------------- ----------------

Low Salary 1

Average Salary 0

High Salary 3

不使用union

select

a.bin,

count(b.bin) total

from(values row("Low Salary"),row("Average Salary"),row("High Salary")) a(bin)

left join(

select

case

when income<20000 then 'Low Salary'

when income<50000 then 'Average Salary'

else 'High Salary'

end `bin`

from accounts

) b using(`bin`)

group by a.bin;

基本字符串处理函数

这里我们展示lower/trim/left/upper/right/length/concat等函数的使用。

数据

type_md = """

| sale_id | int |

| product_name | varchar(20) |

| sale_date | date |

"""

sql_text = """

| sale_id | product_name | sale_date |

| ------- | ------------ | ---------- |

| 1 | LCPHONE | 2000-01-16 |

| 2 | LCPhone | 2000-01-17 |

| 3 | LcPhOnE | 2000-02-18 |

| 4 | LCKeyCHAiN | 2000-02-19 |

| 5 | LCKeyChain | 2000-02-28 |

| 6 | Matryoshka | 2000-03-31 |

"""

df = md2sql(sql_text, type_md, "Sales", db_config)

print(df)

写一个 SQL 语句报告每个月的销售情况

product_name是小写字母且不包含前后空格sale_date格式为('YYYY-MM')total是产品在本月销售的次数

返回结果以 product_name 升序 排列如果有排名相同再以 sale_date 升序 排列。

select

lower(trim(product_name)) product_name,

left(sale_date,7) sale_date,

count(1) total

from sales

group by 1,2

order by 1,2;

product_name sale_date total

------------ --------- --------

lckeychain 2000-02 2

lcphone 2000-01 2

lcphone 2000-02 1

matryoshka 2000-03 1

数据

type_md = """

| user_id | int |

| name | varchar(20) |

"""

sql_text = """

| user_id | name |

| ------- | ----- |

| 1 | aLice |

| 2 | bOB |

"""

df = md2sql(sql_text, type_md, "Users", db_config)

print(df)

修复名字使得只有第一个字符是大写的其余都是小写的。返回按 user_id 排序的结果表。

select

user_id,

concat(upper(left(name,1)),lower(right(name,length(name)-1))) name

from users

order by user_id;

user_id name

------- --------

1 Alice

2 Bob

数据

type_md = """

| person_id | int |

| name | varchar(20) |

| profession | ENUM('Doctor', 'Singer', 'Actor', 'Player', 'Engineer', 'Lawyer') |

"""

sql_text = """

| person_id | name | profession |

| --------- | ----- | ---------- |

| 1 | Alex | Singer |

| 3 | Alice | Actor |

| 2 | Bob | Player |

| 4 | Messi | Doctor |

| 6 | Tyson | Engineer |

| 5 | Meir | Lawyer |

"""

df = md2sql(sql_text, type_md, "Person", db_config)

print(df)

查询每个人的名字后面是他们职业的第一个字母用括号括起来。

返回按 person_id 降序排列 的结果表。

select person_id,concat(name,"(",left(profession,1),")") name

from person

order by person_id desc;

person_id name

--------- ----------

6 Tyson(E)

5 Meir(L)

4 Messi(D)

3 Alice(A)

2 Bob(P)

1 Alex(S)

字符串拼接与分组拼接

数据

type_md = """

| power | int |

| factor | int |

"""

sql_text = """

| power | factor |

| ----- | ------ |

| 2 | 1 |

| 1 | -4 |

| 0 | 2 |

"""

df = md2sql(sql_text, type_md, "Terms", db_config)

print(df)

要求将以上表拼接成+1X^2-4X+2=0形式的字符串。

我的思路是先按列拼接每行的组成元素

select

concat(if(factor>0,"+",""),factor) a,

if(power>0,"X","") b,

if(power>1,concat("^",power),"") c

from terms;

a b c

------ ------ --------

+1 X ^2

-4 X

+2

然后整体拼接

select

concat(group_concat(a,b,c order by power desc separator ""),"=0") equation

from(

select

power,

concat(if(factor>0,"+",""),factor) a,

if(power>0,"X","") b,

if(power>1,concat("^",power),"") c

from terms

) a;

equation

--------------

+1X^2-4X+2=0

group_concat内部需要根据power排序所以子查询中增加power字段separator指定了连接符。

case when写法

select

concat(group_concat(a,b order by power desc separator ""),"=0") equation

from(

select

power,

concat(if(factor>0,"+",""),factor) a,

case power

when 0 then ""

when 1 then "X"

else concat('X^',power)

end b

from terms

) a;

正则表达式

数据

type_md = """

| user_id | int |

| name | varchar(20) |

| mail | varchar(100) |

"""

sql_text = """

| user_id | name | mail |

| ------- | --------- | ----------------------- |

| 1 | Winston | winston@leetcode.com |

| 2 | Jonathan | jonathanisgreat |

| 3 | Annabelle | bella-@leetcode.com |

| 4 | Sally | sally.come@leetcode.com |

| 5 | Marwan | quarz#2020@leetcode.com |

| 6 | David | david69@gmail.com |

| 7 | Shapiro | .shapo@leetcode.com |

"""

df = md2sql(sql_text, type_md, "Users", db_config)

print(df)

查询拥有有效邮箱的用户。

有效的邮箱包含符合下列条件的前缀名和域名

- 前缀名是包含字母大写或小写、数字、下划线

'_'、句点'.'和横杠'-'的字符串。前缀名必须以字母开头。 - 域名是

'@leetcode.com'。

select * from users

where mail regexp "^[a-zA-Z][a-zA-Z0-9_.-]*@leetcode\\.com$";

user_id name mail

------- --------- -------------------------

1 Winston winston@leetcode.com

3 Annabelle bella-@leetcode.com

4 Sally sally.come@leetcode.com

数据

type_md = """

| patient_id | int |

| patient_name | varchar(20) |

| conditions | varchar(50) |

"""

sql_text = """

| patient_id | patient_name | conditions |

| ---------- | ------------ | ------------ |

| 1 | Daniel | YFEV COUGH |

| 2 | Alice | |

| 3 | Bob | DIAB100 MYOP |

| 4 | George | ACNE DIAB100 |

| 5 | Alain | DIAB201 |

"""

df = md2sql(sql_text, type_md, "Patients", db_config)

print(df)

查询患有 I 类糖尿病的患者的全部信息。I 类糖尿病的代码总是包含前缀 DIAB1 。

select * from Patients where conditions regexp "(^| )DIAB1";

patient_id patient_name conditions

---------- ------------ --------------

3 Bob DIAB100 MYOP

4 George ACNE DIAB100

正则表达式的语法可参考

正则表达式速查表与Python实操手册

https://xxmdmst.blog.csdn.net/article/details/112691043

数据

type_md = """

| topic_id | int |

| word | varchar(20) |

"""

sql_text = """

| topic_id | word |

| -------- | -------- |

| 1 | handball |

| 1 | football |

| 3 | WAR |

| 2 | Vaccine |

"""

df = md2sql(sql_text, type_md, "Keywords", db_config)

print(df)

type_md = """

| post_id | int |

| content | varchar(200) |

"""

sql_text = """

| post_id | content |

| ------- | ---------------------------------------------------------------------- |

| 1 | We call it soccer They call it football hahaha |

| 2 | Americans prefer basketball while Europeans love handball and football |

| 3 | stop the war and play handball |

| 4 | warning I planted some flowers this morning and then got vaccinated |

"""

df = md2sql(sql_text, type_md, "Posts", db_config)

print(df)

表: Keywords每一行都包含一个主题的 id 和一个用于表达该主题的词。可以用多个词来表达同一个主题也可以用一个词来表达多个主题。

表: Posts每一行都包含一个帖子的 ID 及其内容。内容仅由英文字母和空格组成。

编写一个 SQL 查询根据以下规则查找每篇文章的主题:

- 如果帖子没有来自任何主题的关键词那么它的主题应该是

"Ambiguous!"。 - 如果该帖子至少有一个主题的关键字其主题应该是其主题的 id 按升序排列并以逗号 ‘’ 分隔的字符串。字符串不应该包含重复的 id。

select

post_id,

ifnull(group_concat(distinct topic_id order by topic_id),"Ambiguous!") topic

from posts p left join keywords k

on content regexp concat("(^| )",word,"( |$)")

group by post_id;

post_id topic

------- ------------

1 1

2 1

3 1,3

4 Ambiguous!

5 1,2

like模糊匹配

like的匹配模式中有两种占位符

_匹配对应的单个字符

%匹配多个字符

针对上一题使用like实现需要考虑三种情况keyword居中起始末尾。参考解法

select

post_id,

ifnull(group_concat(distinct topic_id order by topic_id),"Ambiguous!") topic

from posts p left join keywords k

on content like concat(word," %")

or content like concat("% ",word," %")

or content like concat("% ",word)

group by post_id;

instr函数

针对上题还有种办法是使用instr函数确保文章首尾都有空格后则可以判断首尾带空格的词汇是否存在于文章中

select

post_id,

ifnull(group_concat(distinct topic_id order by topic_id),"Ambiguous!") topic

from posts p left join keywords k

on instr(concat(' ',content,' '),concat(' ',word,' '))>0

group by post_id;

with rollup的使用

Hive中支持 GROUPING SETS,GROUPING__ID,CUBE,ROLLUP等函数MySQL则只支持rollup。下面演示一下roll up的使用。

数据

type_md = """

| id | int |

| employee_id | int |

| amount | int |

| pay_date | date |

"""

sql_text = """

| id | employee_id | amount | pay_date |

|----|-------------|--------|------------|

| 1 | 1 | 9000 | 2017-03-31 |

| 2 | 2 | 6000 | 2017-03-31 |

| 3 | 3 | 10000 | 2017-03-31 |

| 4 | 1 | 7000 | 2017-02-28 |

| 5 | 2 | 6000 | 2017-02-28 |

| 6 | 3 | 8000 | 2017-02-28 |

"""

df = md2sql(sql_text, type_md, "salary", db_config)

print(df)

type_md = """

| employee_id | int |

| department_id | int |

"""

sql_text = """

| employee_id | department_id |

|-------------|---------------|

| 1 | 1 |

| 2 | 2 |

| 3 | 2 |

"""

df = md2sql(sql_text, type_md, "Employee", db_config)

print(df)

该题正常解法请查看最后一章的《部门与公司比较平均工资》

mysql支持rollup我们可以使用一个分组查询即可同时获取每个月部门和公司的平均工资

select

left(pay_date,7) pay_month,

department_id,

avg(amount) amount

from salary a join employee b

using(employee_id)

group by left(pay_date,7),department_id

with rollup;

结果

pay_month department_id amount

--------- ------------- -----------

2017-02 1 7000.0000

2017-02 2 7000.0000

2017-02 (NULL) 7000.0000

2017-03 1 9000.0000

2017-03 2 8000.0000

2017-03 (NULL) 8333.3333

(NULL) (NULL) 7666.6667

GROUPING() 函数可以检查超级聚合中聚合字段是否为空

select

left(pay_date,7) pay_month,

department_id,

avg(amount) amount,

grouping(left(pay_date,7)) e1,

grouping(department_id) e2

from salary a join employee b

using(employee_id)

group by left(pay_date,7),department_id

with rollup;

pay_month department_id amount e1 e2

--------- ------------- --------- ------ --------

2017-02 1 7000.0000 0 0

2017-02 2 7000.0000 0 0

2017-02 (NULL) 7000.0000 0 1

2017-03 1 9000.0000 0 0

2017-03 2 8000.0000 0 0

2017-03 (NULL) 8333.3333 0 1

(NULL) (NULL) 7666.6667 1 1

然后我们分解结果进行表连接

with cte as (

select

left(pay_date,7) pay_month,

department_id,

avg(amount) v

from salary a join employee b

using(employee_id)

group by left(pay_date,7),department_id

with rollup

)

select

a.pay_month,a.department_id,

case

when a.v>b.v then "higher"

when a.v<b.v then "lower"

else "same"

end comparison

from(

select * from cte where pay_month is not null and department_id is not null

) a join (

select * from cte where pay_month is not null and department_id is null

) b using(pay_month);

pay_month department_id comparison

--------- ------------- ------------

2017-02 1 same

2017-02 2 same

2017-03 1 higher

2017-03 2 lower

日期操作

date_sub函数

数据

type_md = """

| user_id | int |

| activity | enum('login','logout','jobs','groups','homepage') |

| activity_date | date |

"""

sql_text = """

| user_id | activity | activity_date |

+---------+----------+---------------+

| 1 | login | 2019-05-01 |

| 1 | homepage | 2019-05-01 |

| 1 | logout | 2019-05-01 |

| 2 | login | 2019-06-21 |

| 2 | logout | 2019-06-21 |

| 3 | login | 2019-01-01 |

| 3 | jobs | 2019-01-01 |

| 3 | logout | 2019-01-01 |

| 4 | login | 2019-06-21 |

| 4 | groups | 2019-06-21 |

| 4 | logout | 2019-06-21 |

| 5 | login | 2019-03-01 |

| 5 | logout | 2019-03-01 |

| 5 | login | 2019-06-21 |

| 5 | logout | 2019-06-21 |

"""

df = md2sql(sql_text, type_md, "Traffic", db_config)

print(df)

查询从今天起最多 90 天内每个日期该日期首次登录的用户数。假设今天是 2019-06-30.

思路

- 过滤出每个用户的登录数据

- 标记这是每个用户第几次登录

- 过滤第一次登录并判断登录时间是否在一个月之内

- 分组计数

select

login_date,count(user_id) user_count

from(

select

user_id,activity_date login_date,

row_number() over(partition by user_id order by activity_date) rn

from traffic

where activity="login"

) a

where a.rn=1 and login_date>=subdate('2019-06-30', 90)

group by login_date;

login_date user_count

---------- ------------

2019-05-01 1

2019-06-21 2

注意

subdate(‘2019-06-30’, 90)等价于date_sub(‘2019-06-30’, interval 90 day)

adddate(‘2019-06-30’, 90)等价于date_add(‘2019-06-30’, interval 90 day)

数据

type_md = """

| book_id | int |

| name | varchar(20) |

| available_from | date |

"""

sql_text = """

| book_id | name | available_from |

| ------- | ---------------- | -------------- |

| 1 | Kalila And Demna | 2010-01-01 |

| 2 | 28 Letters | 2012-05-12 |

| 3 | The Hobbit | 2019-06-10 |

| 4 | 13 Reasons Why | 2019-06-01 |

| 5 | The Hunger Games | 2008-09-21 |

"""

df = md2sql(sql_text, type_md, "books", db_config)

print(df)

type_md = """

| order_id | int |

| book_id | int |

| quantity | int |

| dispatch_date | date |

"""

sql_text = """

| order_id | book_id | quantity | dispatch_date |

| -------- | ------- | -------- | ------------- |

| 1 | 1 | 2 | 2018-07-26 |

| 2 | 1 | 1 | 2018-11-05 |

| 3 | 3 | 8 | 2019-06-11 |

| 4 | 4 | 6 | 2019-06-05 |

| 5 | 4 | 5 | 2019-06-20 |

| 6 | 5 | 9 | 2009-02-02 |

| 7 | 5 | 8 | 2010-04-13 |

"""

df = md2sql(sql_text, type_md, "Orders", db_config)

print(df)

筛选出过去一年中订单总量 少于10本 的 书籍 。

注意不考虑 上架available from距今 不满一个月 的书籍。并且 假设今天是 2019-06-23 。

首先我们查询每本书过去一年的订单

select

a.book_id,name,available_from,quantity,dispatch_date

from books a left join orders b

on a.book_id=b.book_id and dispatch_date>=date_sub('2019-06-23', interval 1 year)

book_id name available_from quantity dispatch_date

------- ---------------- -------------- -------- ---------------

1 Kalila And Demna 2010-01-01 1 2018-11-05

1 Kalila And Demna 2010-01-01 2 2018-07-26

2 28 Letters 2012-05-12 (NULL) (NULL)

3 The Hobbit 2019-06-10 8 2019-06-11

4 13 Reasons Why 2019-06-01 5 2019-06-20

4 13 Reasons Why 2019-06-01 6 2019-06-05

5 The Hunger Games 2008-09-21 (NULL) (NULL)

然后过滤掉上架不满一个月的书籍

select

a.book_id,name,available_from,quantity,dispatch_date

from books a left join orders b

on a.book_id=b.book_id and dispatch_date>=date_sub('2019-06-23', interval 1 year)

where available_from <= date_sub('2019-06-23', interval 1 month);

最终就可以找出小众书籍

select

a.book_id,

any_value(a.name) `name`

from books a left join orders b

on a.book_id=b.book_id and dispatch_date>=date_sub('2019-06-23', interval 1 year)

where available_from <= date_sub('2019-06-23', interval 1 month)

group by a.book_id

having ifnull(sum(quantity),0)<10;

datediff函数

上面的问题同样可以使用datediff函数来进行判断datediff用于计算两个日期之间相差的天数。

数据

type_md = """

| user_id | int |

| session_id | int |

| activity_date | date |

| activity_type | enum('open_session', 'end_session', 'scroll_down', 'send_message') |

"""

sql_text = """

| user_id | session_id | activity_date | activity_type |

| ------- | ---------- | ------------- | ------------- |

| 1 | 1 | 2019-07-20 | open_session |

| 1 | 1 | 2019-07-20 | scroll_down |

| 1 | 1 | 2019-07-20 | end_session |

| 2 | 4 | 2019-07-20 | open_session |

| 2 | 4 | 2019-07-21 | send_message |

| 2 | 4 | 2019-07-21 | end_session |

| 3 | 2 | 2019-07-21 | open_session |

| 3 | 2 | 2019-07-21 | send_message |

| 3 | 2 | 2019-07-21 | end_session |

| 3 | 5 | 2019-07-21 | open_session |

| 3 | 5 | 2019-07-21 | scroll_down |

| 3 | 5 | 2019-07-21 | end_session |

| 4 | 3 | 2019-06-25 | open_session |

| 4 | 3 | 2019-06-25 | end_session |

"""

df = md2sql(sql_text, type_md, "Activity", db_config)

print(df)

查询以查找截至 2019-07-27含的 30 天内每个用户的平均会话数四舍五入到小数点后两位。只统计那些会话期间用户至少进行一项活动的有效会话。

总会话数 除以 总用户数即可得到每个用户的平均会话数

select

round(

ifnull(

count(distinct session_id)/count(distinct user_id)

,0)

,2) average_sessions_per_user

from activity

where datediff("2019-07-27",activity_date)<30;

timestampdiff函数

语法timestampdiff(unit, begin, end)

unit支持的参数

- 秒second

- 分钟minute

- 小时hour

- 天day

- 周week

- 月month

- 季quarter

- 年year

相对于datediff函数timestampdiff支持任意单位。

数据

type_md = """

| employee_id | int |

| needed_hours | int |

"""

sql_text = """

| employee_id | needed_hours |

| ----------- | ------------ |

| 1 | 20 |

| 2 | 12 |

| 3 | 2 |

"""

df = md2sql(sql_text, type_md, "Employees", db_config)

print(df)

type_md = """

| employee_id | int |

| in_time | datetime |

| out_time | datetime |

"""

sql_text = """

| employee_id | in_time | out_time |

| ----------- | ------------------- | ------------------- |

| 1 | 2022-10-01 09:00:00 | 2022-10-01 17:00:00 |

| 1 | 2022-10-06 09:05:04 | 2022-10-06 17:09:03 |

| 1 | 2022-10-12 23:00:00 | 2022-10-13 03:00:01 |

| 2 | 2022-10-29 12:00:00 | 2022-10-29 23:58:58 |

"""

df = md2sql(sql_text, type_md, "Logs", db_config)

print(df)

表: Employees每一行都包含员工的 id 和他们获得工资所需的最低工作时数。employee_id 是该表的主键。

表: Logs每一行都显示了员工的工作时间。in_time 是员工开始工作的时间out_time 是员工结束工作的时间。out_time 可以是 in_time 之后的一天意味着该员工在午夜之后工作。

个员工每个月必须工作一定的小时数。员工在工作段中工作。员工工作的小时数可以通过员工在所有工作段中工作的分钟数的总和来计算。每个工作段的分钟数是四舍五入的。

- 例如如果员工在一个时间段中工作了

51分2秒我们就认为它是52分钟。查询没有达到工作所需时间的员工的 id。

首先统计每个员工工作的分钟数和所需的最低分钟数

select

a.employee_id,

sum(ceil(timestampdiff(second,in_time,out_time)/60)) t,

any_value(needed_hours*60) needed_minutes

from employees a left join logs b using(employee_id)

group by a.employee_id

employee_id t needed_minutes

----------- ------ ----------------

1 1205 1200

2 719 720

3 (NULL) 120

然后找出不达标的员工

select

employee_id

from(

select

a.employee_id,

sum(ceil(timestampdiff(second,in_time,out_time)/60)) t,

any_value(needed_hours*60) needed_minutes

from employees a left join logs b using(employee_id)

group by a.employee_id

) a

where t is null or t<needed_minutes;

employee_id

-------------

2

3

weekday计算星期几

weekday对一个日期返回0-6的数字分别表示从周一到周日。

数据

type_md = """

| task_id | int |

| assignee_id | int |

| submit_date | date |

"""

sql_text = """

| task_id | assignee_id | submit_date |

| ------- | ----------- | ----------- |

| 1 | 1 | 2022-06-13 |

| 2 | 6 | 2022-06-14 |

| 3 | 6 | 2022-06-15 |

| 4 | 3 | 2022-06-18 |

| 5 | 5 | 2022-06-19 |

| 6 | 7 | 2022-06-19 |

"""

df = md2sql(sql_text, type_md, "Tasks", db_config)

print(df)

task_id 是此表的主键每一行都包含任务 ID、委托人 ID 和提交日期。

查询

- 在周末 (周六周日) 提交的任务的数量

weekend_cnt - 工作日内提交的任务数

working_cnt。

select

sum(weekday(submit_date) in (5,6)) weekend_cnt,

sum(weekday(submit_date) between 0 and 4) working_cnt

from tasks;

weekend_cnt working_cnt

----------- -------------

3 3

数据

type_md = """

| order_id | int |

| customer_id | int |

| order_date | date |

| item_id | varchar(20) |

| quantity | int |

"""

sql_text = """

| order_id | customer_id | order_date | item_id | quantity |

| -------- | ----------- | ---------- | ------- | -------- |

| 1 | 1 | 2020-06-01 | 1 | 10 |

| 2 | 1 | 2020-06-08 | 2 | 10 |

| 3 | 2 | 2020-06-02 | 1 | 5 |

| 4 | 3 | 2020-06-03 | 3 | 5 |

| 5 | 4 | 2020-06-04 | 4 | 1 |

| 6 | 4 | 2020-06-05 | 5 | 5 |

| 7 | 5 | 2020-06-05 | 1 | 10 |

| 8 | 5 | 2020-06-14 | 4 | 5 |

| 9 | 5 | 2020-06-21 | 3 | 5 |

"""

df = md2sql(sql_text, type_md, "Orders", db_config)

print(df)

type_md = """

| item_id | varchar(20) |

| item_name | varchar(20) |

| item_category | varchar(20) |

"""

sql_text = """

| item_id | item_name | item_category |

| ------- | -------------- | ------------- |

| 1 | LC Alg. Book | Book |

| 2 | LC DB. Book | Book |

| 3 | LC SmarthPhone | Phone |

| 4 | LC Phone 2020 | Phone |

| 5 | LC SmartGlass | Glasses |

| 6 | LC T-Shirt XL | T-shirt |

"""

df = md2sql(sql_text, type_md, "Items", db_config)

print(df)

查询 周内每天 每个商品类别下订购了多少单位返回结果 按商品类别排序 。

首先统计周内每天每类商品的销售额

select

item_category category,

weekday(order_date) week,

sum(ifnull(quantity,0)) q

from items left join orders using(item_id)

group by 1,2

category week q

-------- ------ --------

Book 4 10

Book 1 5

Book 0 20

Phone 6 10

Phone 2 5

Phone 3 1

Glasses 4 5

T-shirt (NULL) 0

然后进行透视得到结果

select

category,

sum(if(week=0,q,0)) Monday,

sum(if(week=1,q,0)) Tuesday,

sum(if(week=2,q,0)) Wednesday,

sum(if(week=3,q,0)) Thursday,

sum(if(week=4,q,0)) Friday,

sum(if(week=5,q,0)) Saturday,

sum(if(week=6,q,0)) Sunday

from(

select

item_category category,

weekday(order_date) week,

sum(ifnull(quantity,0)) q

from items left join orders using(item_id)

group by 1,2

) a

group by 1

order by 1;

category Monday Tuesday Wednesday Thursday Friday Saturday Sunday

-------- ------ ------- --------- -------- ------ -------- --------

Book 20 5 0 0 10 0 0

Glasses 0 0 0 0 5 0 0

Phone 0 0 5 1 0 0 10

T-shirt 0 0 0 0 0 0 0

date_format日期格式化

语法 DATE_FORMAT(date,format)

date参数是合法的日期。format规定日期/时间的输出格式。 可以使用的格式有

格式 描述

%a 缩写星期名

%b 缩写月名

%c 月数值

%D 带有英文前缀的月中的天

%d 月的天数值(00-31)

%e 月的天数值(0-31)

%f 微秒

%H 小时 (00-23)

%h 小时 (01-12)

%I 小时 (01-12)

%i 分钟数值(00-59)

%j 年的天 (001-366)

%k 小时 (0-23)

%l 小时 (1-12)

%M 月名

%m 月数值(00-12)

%p AM 或 PM

%r 时间12-小时hh:mm:ss AM 或 PM

%S 秒(00-59)

%s 秒(00-59)

%T 时间, 24-小时 (hh:mm:ss)

%U 周 (00-53) 星期日是一周的第一天

%u 周 (00-53) 星期一是一周的第一天

%V 周 (01-53) 星期日是一周的第一天与 %X 使用

%v 周 (01-53) 星期一是一周的第一天与 %x 使用

%W 星期名

%w 周的天 0=星期日, 6=星期六

%X 年其中的星期日是周的第一天4 位与 %V 使用

%x 年其中的星期一是周的第一天4 位与 %v 使用

%Y 年4 位

%y 年2 位

sql_txt = """

Create table If Not Exists Days (day date)

Truncate table Days

insert into Days (day) values ('2022-04-12')

insert into Days (day) values ('2021-08-09')

insert into Days (day) values ('2020-06-26')

"""

SQL_Schema_import(sql_txt)

将Days表中的每一个日期转化为"day_name, month_name day, year"格式的字符串。

select date_format(day,"%W, %M %e, %Y") day from days

day

-------------------------

Tuesday, April 12, 2022

Monday, August 9, 2021

Friday, June 26, 2020

日期区间拆分为年份

数据

type_md = """

| product_id | int |

| product_name | varchar(20) |

"""

sql_text = """

| product_id | product_name |

| ---------- | ------------ |

| 1 | LC Phone |

| 2 | LC T-Shirt |

| 3 | LC Keychain |

"""

df = md2sql(sql_text, type_md, "Product", db_config)

print(df)

type_md = """

| product_id | int |

| period_start | date |

| period_end | date |

| average_daily_sales | int |

"""

sql_text = """

| product_id | period_start | period_end | average_daily_sales |

| ---------- | ------------ | ---------- | ------------------- |

| 1 | 2019-01-25 | 2019-02-28 | 100 |

| 2 | 2018-12-01 | 2020-01-01 | 10 |

| 3 | 2019-12-01 | 2020-01-31 | 1 |

"""

df = md2sql(sql_text, type_md, "Sales", db_config)

print(df)

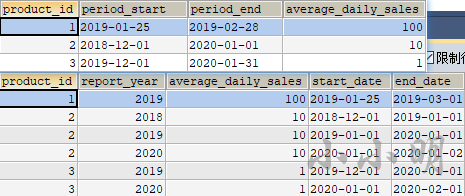

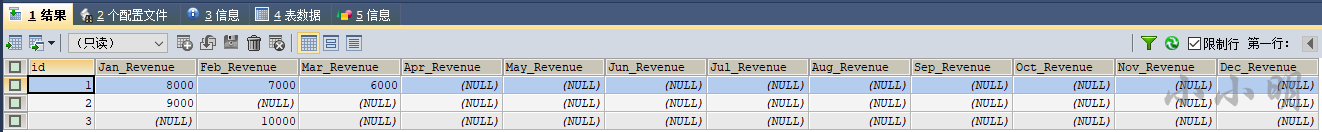

查询每个产品每年的总销售额并包含 product_id, product_name 以及 report_year 等信息。

销售年份介于 2018 年到 2020 年之间结果需要按 product_id 和 report_year 排序。

对于这题难点在于如何按年拆分日期首先我们先生成2018 年到 2020 年日期序列相关基础见生成序列并统计一节

select yr from(values row(2018), row(2019), row(2020)) yr_t(yr);

yr

--------

2018

2019

2020

使用makedate函数即可基于该年创建日期

select

yr,makedate(yr,1),makedate(yr+1,1)

from(values row(2018), row(2019), row(2020)) yr_t(yr);

yr makedate(yr,1) makedate(yr+1,1)

------ -------------- ------------------

2018 2018-01-01 2019-01-01

2019 2019-01-01 2020-01-01

2020 2020-01-01 2021-01-01

makedate的第二个参数为dayofyear表示第几天但每一年的总天数是不确定的所以为了表示2018年使用[2018-01-01,2019-01-01)。

下面我们将销售数据拆分到每一年

select

product_id,

yr report_year,

average_daily_sales,

period_start,period_end,

greatest(period_start,makedate(yr,1)) start_date,

least(adddate(period_end,1),makedate(yr+1,1)) end_date

from (values row(2018), row(2019), row(2020)) yr_t(yr)

join sales on yr between year(period_start) and year(period_end)

order by 1,2;

然后我们可以看到拆分效果

可以看到区间被完美的拆分到每个年份中。

最终结果

select

a.product_id,

b.product_name,

report_year,

average_daily_sales*datediff(end_date,start_date) total_amount

from(

select

product_id,

convert(yr,char) report_year,

average_daily_sales,

greatest(period_start,makedate(yr,1)) start_date,

least(adddate(period_end,1),makedate(yr+1,1)) end_date

from (values row(2018), row(2019), row(2020)) yr_t(yr)

join sales on yr between year(period_start) and year(period_end)

) a join product b using(product_id)

order by product_id,report_year;

product_id product_name report_year total_amount

---------- ------------ ----------- --------------

1 LC Phone 2019 3500

2 LC T-Shirt 2018 310

2 LC T-Shirt 2019 3650

2 LC T-Shirt 2020 10

3 LC Keychain 2019 31

3 LC Keychain 2020 31

注意convert(yr,char)是因为原题要求报告年份为字符串类型。

窗口函数

排名函数

数据

type_md = """

+-------------+---------+

| Column Name | Type |

+-------------+---------+

| id | int |

| score | decimal(10,2) |

+-------------+---------+

"""

sql_text = """

+----+-------+

| id | score |

+----+-------+

| 1 | 3.50 |

| 2 | 3.65 |

| 3 | 4.00 |

| 4 | 3.85 |

| 5 | 4.00 |

| 6 | 3.65 |

+----+-------+

"""

df = md2sql(sql_text, type_md, "Scores", db_config)

print(df.to_markdown(index=False))

注意明显需要保留2位小数所以需要给decimal类型指定长度手工将decimal修改为decimal(10,2)

看看四种排名窗口的效果

select

*,

row_number() over(order by score) rn1,

rank() over(order by score) rn2,

dense_rank() over(order by score) rn3,

ntile(2) over(order by score) rn4,

ntile(4) over(order by score) rn5

from scores;

结果

id score rn1 rn2 rn3 rn4 rn5

------ ------ ------ ------ ------ ------ --------

1 3.50 1 1 1 1 1

2 3.65 2 2 2 1 1

6 3.65 3 2 2 1 2

4 3.85 4 4 3 2 2

3 4.00 5 5 4 2 3

5 4.00 6 5 4 2 4

解释

- row_number()会保持序号递增不重复相同数值按出现顺序排名。

- rank()相同数值排名相同在名次中会留下空位。

- dense_rank()相同数值排名相同在名次中不会留下空位。

- ntile(group_num)将所有记录分成group_num个组每组序号一样。如果切片不均匀默认增加前面切片的分布。

注意排名函数均不支持WINDOW子句。即ROWS BETWEEN语句

还有两种不常用的排名函数

select

*,

round(cume_dist() over(order by score),2) rn1,

rank() over(order by score) `rank`,

round(percent_rank() over(order by score),2) rn2

from scores;

结果

id score rn1 rank rn2

------ ------ ------ ------ --------

1 3.50 0.17 1 0

2 3.65 0.5 2 0.2

6 3.65 0.5 2 0.2

4 3.85 0.67 4 0.6

3 4.00 1 5 0.8

5 4.00 1 5 0.8

解释

- CUME_DIST小于等于当前值的行数/分组内总行数

- PERCENT_RANK(分组内当前行的RANK值-1)/(分组内总行数-1)

偏移分析窗口函数

偏移分析函数的基本用法

LAG,LEAD,FIRST_VALUE,LAST_VALUE这四个窗口函数属于偏移分析函数不支持WINDOW子句。

LAG(col,n,DEFAULT) 用于统计窗口内往上第n行值

第一个参数为列名第二个参数为往上第n行可选默认为1第三个参数为默认值当往上第n行为NULL时候取默认值如不指定则为NULL。

LEAD(col,n,DEFAULT) 用于统计窗口内往下第n行值

第一个参数为列名第二个参数为往下第n行可选默认为1第三个参数为默认值当往下第n行为NULL时候取默认值如不指定则为NULL。

FIRST_VALUE取分组内排序后截止到当前行第一个值。

LAST_VALUE取分组内排序后截止到当前行最后一个值。

在使用偏移分析函数的过程中要特别注意ORDER BY子句。

数据

type_md = """

| id | int |

| recordDate | date |

| temperature | int |

"""

sql_text = """

| id | recordDate | temperature |

| -- | ---------- | ----------- |

| 1 | 2015-01-01 | 10 |

| 2 | 2015-01-02 | 25 |

| 3 | 2015-01-03 | 20 |

| 4 | 2015-01-04 | 30 |

"""

df = md2sql(sql_text, type_md, "Weather", db_config)

print(df)

编写一个 SQL 查询查找与昨天的日期相比温度更高的所有日期的 id 。

select

id

from(

select id,Temperature,lag(Temperature) over(order by recordDate) last_t from Weather

) a

where a.Temperature>a.last_t;

id

--------

2

4

数据

type_md = """

| user_id | int |

| time_stamp | datetime |

| action | ENUM('confirmed','timeout') |

"""

sql_text = """

| user_id | time_stamp | action |

| ------- | ------------------- | --------- |

| 3 | 2021-01-06 03:30:46 | timeout |

| 3 | 2021-01-06 03:37:45 | timeout |

| 7 | 2021-06-12 11:57:29 | confirmed |

| 7 | 2021-06-13 11:57:30 | confirmed |

| 2 | 2021-01-22 00:00:00 | confirmed |

| 2 | 2021-01-23 00:00:00 | timeout |

| 6 | 2021-10-23 14:14:14 | confirmed |

| 6 | 2021-10-24 14:14:13 | timeout |

"""

df = md2sql(sql_text, type_md, "Confirmations", db_config)

print(df)

Confirmations表每一行都表示 ID 为 user_id 的用户在 time_stamp 请求了确认消息并且该确认消息已被确认‘confirmed’或已过期‘timeout’。

查找在 24 小时窗口内含两次请求确认消息的用户的 ID。

可以先查询每个用户的下次确认时间

select

user_id,

time_stamp,

lead(time_stamp) over(partition by user_id order by time_stamp) next

from Confirmations

user_id time_stamp next

------- ------------------- ---------------------

2 2021-01-22 00:00:00 2021-01-23 00:00:00

2 2021-01-23 00:00:00 (NULL)

3 2021-01-06 03:30:46 2021-01-06 03:37:45

3 2021-01-06 03:37:45 (NULL)

6 2021-10-23 14:14:14 2021-10-24 14:14:13

6 2021-10-24 14:14:13 (NULL)

7 2021-06-12 11:57:29 2021-06-13 11:57:30

7 2021-06-13 11:57:30 (NULL)

然后判断两次相隔的时间是否在一天之内即可

select

distinct user_id

from(

select

user_id,

time_stamp,

lead(time_stamp) over(partition by user_id order by time_stamp) next

from Confirmations

) a

where next<=adddate(time_stamp,1);

user_id

---------

2

3

6

type_md = """

| user_id | int |

| visit_date | date |

"""

sql_text = """

| user_id | visit_date |

| ------- | ---------- |

| 1 | 2020-11-28 |

| 1 | 2020-10-20 |

| 1 | 2020-12-3 |

| 2 | 2020-10-5 |

| 2 | 2020-12-9 |

| 3 | 2020-11-11 |

"""

df = md2sql(sql_text, type_md, "UserVisits", db_config)

print(df)

假设今天的日期是 '2021-1-1' 。

编写 SQL 语句对于每个 user_id 求出每次访问及其下一个访问若该次访问是最后一次则为今天之间最大的空档期天数 window 。

返回结果表按用户编号 user_id 排序。

首先求出每次访问到下次访问的空档天数

select

user_id,visit_date,

lead(visit_date,1,"2021-1-1") over(partition by user_id order by visit_date) next_date,

datediff(lead(visit_date,1,"2021-1-1") over(partition by user_id order by visit_date),visit_date) w

from uservisits

user_id visit_date next_date w

------- ---------- ---------- --------

1 2020-10-20 2020-11-28 39

1 2020-11-28 2020-12-03 5

1 2020-12-03 2021-1-1 29

2 2020-10-05 2020-12-09 65

2 2020-12-09 2021-1-1 23

3 2020-11-11 2021-1-1 51

然后统计每个用户的最大空档天数

select

user_id,

max(w) biggest_window

from(

select

user_id,

datediff(lead(visit_date,1,"2021-1-1") over(partition by user_id order by visit_date),visit_date) w

from uservisits

) a

group by 1

order by 1;

user_id biggest_window

------- ----------------

1 39

2 65

3 51

统计分析函数

统计分析函数的基本用法

SUM、AVG、MIN、MAX这四个窗口函数属于统计分析函数支持WINDOW子句。

数据

type_md = """

| person_id | int |

| person_name | varchar(20) |

| weight | int |

| turn | int |

"""

sql_text = """

| person_id | person_name | weight | turn |

| --------- | ----------- | ------ | ---- |

| 5 | Alice | 250 | 1 |

| 4 | Bob | 175 | 5 |

| 3 | Alex | 350 | 2 |

| 6 | John Cena | 400 | 3 |

| 1 | Winston | 500 | 6 |

| 2 | Marie | 200 | 4 |

"""

df = md2sql(sql_text, type_md, "Queue", db_config)

print(df)

有一群人在等着上公共汽车。巴士有1000 公斤的重量限制所以可能会有一些人不能上。

查询 最后一个 能进入电梯且不超过重量限制的 person_name 。数据确保队列中第一位的人可以进入电梯不会超重。

首先计算每个人进入电梯后的累积重量

select

person_name,

sum(weight) over(order by turn) weight

from Queue;

person_name weight

----------- --------

Alice 250

Alex 600

John Cena 1000

Marie 1200

Bob 1375

Winston 1875

然后筛选并取最大

select

person_name

from(

select

person_name,

sum(weight) over(order by turn) weight

from Queue

) a

where weight<=1000

order by weight desc limit 1;

很明显John Cena是最后一个体重合适并进入电梯的人。

数据

type_md = """

| account_id | int |

| day | date |

| type | ENUM('Deposit','Withdraw') |

| amount | int |

"""

sql_text = """

| account_id | day | type | amount |

| ---------- | ---------- | -------- | ------ |

| 1 | 2021-11-07 | Deposit | 2000 |

| 1 | 2021-11-09 | Withdraw | 1000 |

| 1 | 2021-11-11 | Deposit | 3000 |

| 2 | 2021-12-07 | Deposit | 7000 |

| 2 | 2021-12-12 | Withdraw | 7000 |

"""

df = md2sql(sql_text, type_md, "Transactions", db_config)

print(df)

交易类型(type)字段包括了两种行为存入 (‘Deposit’), 取出(‘Withdraw’).

查询用户每次交易完成后的账户余额所有用户在进行交易前的账户余额都为0。数据保证所有交易行为后的余额不为负数。

返回的结果按照 账户account_id), 日期( day ) 进行升序排序 。

select

account_id,day,

sum(if(type="Deposit",amount,-amount)) over(partition by account_id order by day) balance

from transactions

order by 1,2;

account_id day balance

---------- ---------- ---------

1 2021-11-07 2000

1 2021-11-09 1000

1 2021-11-11 4000

2 2021-12-07 7000

2 2021-12-12 0

count也支持窗口函数

数据

type_md = """

| username | varchar(20) |

| activity | varchar(20) |

| startDate | Date |

| endDate | Date |

"""

sql_text = """

| username | activity | startDate | endDate |

| -------- | -------- | ---------- | ---------- |

| Alice | Travel | 2020-02-12 | 2020-02-20 |

| Alice | Dancing | 2020-02-21 | 2020-02-23 |

| Alice | Travel | 2020-02-24 | 2020-02-28 |

| Bob | Travel | 2020-02-11 | 2020-02-18 |

"""

df = md2sql(sql_text, type_md, "UserActivity", db_config)

print(df)

查询每一位用户 最近第二次 的活动如果用户仅有一次活动返回该活动

数据保证一个用户不能同时多项活动。

首先标记每个用户的第几次活动和总活动次数

select

*,

rank() over(partition by username order by startDate desc) rk,

count(1) over(partition by username) cnt

from UserActivity;

username activity startdate enddate rk cnt

-------- -------- ---------- ---------- ------ --------

Alice Travel 2020-02-24 2020-02-28 1 3

Alice Dancing 2020-02-21 2020-02-23 2 3

Alice Travel 2020-02-12 2020-02-20 3 3

Bob Travel 2020-02-11 2020-02-18 1 1

最后再过滤

select

username,activity,startDate,endDate

from(

select

*,

rank() over(partition by username order by startDate desc) rk,

count(1) over(partition by username) cnt

from UserActivity

) a

where a.rk=2 or a.cnt=1;

username activity startDate endDate

-------- -------- ---------- ------------

Alice Dancing 2020-02-21 2020-02-23

Bob Travel 2020-02-11 2020-02-18

数据

type_md = """

| caller_id | int |

| recipient_id | int |

| call_time | datetime |

"""

sql_text = """

| caller_id | recipient_id | call_time |

| --------- | ------------ | ------------------- |

| 8 | 4 | 2021-08-24 17:46:07 |

| 4 | 8 | 2021-08-24 19:57:13 |

| 5 | 1 | 2021-08-11 05:28:44 |

| 8 | 3 | 2021-08-17 04:04:15 |

| 11 | 3 | 2021-08-17 13:07:00 |

| 8 | 11 | 2021-08-17 22:22:22 |

"""

df = md2sql(sql_text, type_md, "Calls", db_config)

print(df)

(caller_id, recipient_id, call_time) 是Calls表的主键。

查询在任意一天的第一个电话和最后一个电话都是和同一个人的拨打者和接收者均记录。

首先标记每个通话者每天的的通话序号

select

u1,u2,date(call_time) dt,call_time,

row_number() over(partition by u1,date(call_time) order by call_time) rn,

count(1) over(partition by u1,date(call_time)) num

from(

select caller_id u1,recipient_id u2,call_time from calls

union all

select recipient_id,caller_id,call_time from calls

) a;

u1 u2 dt call_time rn num

------ ------ ---------- ------------------- ------ --------

1 5 2021-08-11 2021-08-11 05:28:44 1 1

3 8 2021-08-17 2021-08-17 04:04:15 1 2

3 11 2021-08-17 2021-08-17 13:07:00 2 2

4 8 2021-08-24 2021-08-24 17:46:07 1 2

4 8 2021-08-24 2021-08-24 19:57:13 2 2

5 1 2021-08-11 2021-08-11 05:28:44 1 1

8 3 2021-08-17 2021-08-17 04:04:15 1 2

8 11 2021-08-17 2021-08-17 22:22:22 2 2

8 4 2021-08-24 2021-08-24 17:46:07 1 2

8 4 2021-08-24 2021-08-24 19:57:13 2 2

11 3 2021-08-17 2021-08-17 13:07:00 1 2

11 8 2021-08-17 2021-08-17 22:22:22 2 2

然后后过滤出每个用户每天首次和最后一次通话的对象

select

u1,u2,dt

from(

select

u1,u2,date(call_time) dt,

row_number() over(partition by u1,date(call_time) order by call_time) rn,

count(1) over(partition by u1,date(call_time)) num

from(

select caller_id u1,recipient_id u2,call_time from calls

union all

select recipient_id,caller_id,call_time from calls

) a

) b

where rn=1 or rn=num;

u1 u2 dt

------ ------ ------------

1 5 2021-08-11

3 8 2021-08-17

3 11 2021-08-17

4 8 2021-08-24

4 8 2021-08-24

5 1 2021-08-11

8 3 2021-08-17

8 11 2021-08-17

8 4 2021-08-24

8 4 2021-08-24

11 3 2021-08-17

11 8 2021-08-17

最后找出某天某个用户的首次和最后一次通话对象一致的用户

select

distinct u1 user_id

from(

select

u1,u2,date(call_time) dt,

row_number() over(partition by u1,date(call_time) order by call_time) rn,

count(1) over(partition by u1,date(call_time)) num

from(

select caller_id u1,recipient_id u2,call_time from calls

union all

select recipient_id,caller_id,call_time from calls

) a

) b

where rn=1 or rn=num

group by u1,dt

having count(distinct u2)=1;

user_id

---------

1

4

5

8

window子句ROWS与RANGE的区别

window子句

如果指定ORDER BY不指定ROWS BETWEEN默认为从起点到当前行相当于

ROWS BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW

或

ROWS UNBOUNDED PRECEDING

如果不指定ORDER BY则将分组内所有值累加相当于

ROWS BETWEEN UNBOUNDED PRECEDING AND UNBOUNDED FOLLOWING

分组内当前行+往前3行

ROWS BETWEEN 3 PRECEDING AND CURRENT ROW

或

ROWS 3 PRECEDING

分组内往前3行到往后1行

ROWS BETWEEN 3 PRECEDING AND 1 FOLLOWING

分组内当前行+往后所有行

ROWS BETWEEN CURRENT ROW AND UNBOUNDED FOLLOWING

WINDOW子句各项含义

- PRECEDING往前

- FOLLOWING往后

- CURRENT ROW当前行

- UNBOUNDED起点

- UNBOUNDED PRECEDING表示从前面的起点

- UNBOUNDED FOLLOWING表示到后面的终点

ROWS是以实际的数据行排序RANGE是逻辑上的排序例如order by指定月份字段时如果存在缺失月份也会被考虑进去。

数据

type_md = """

| customer_id | int |

| name | varchar(20) |

| visited_on | date |

| amount | int |

"""

sql_text = """

| customer_id | name | visited_on | amount |

| ----------- | ------- | ---------- | ------ |

| 1 | Jhon | 2019-01-01 | 100 |

| 2 | Daniel | 2019-01-02 | 110 |

| 3 | Jade | 2019-01-03 | 120 |

| 4 | Khaled | 2019-01-04 | 130 |

| 5 | Winston | 2019-01-05 | 110 |

| 6 | Elvis | 2019-01-06 | 140 |

| 7 | Anna | 2019-01-07 | 150 |

| 8 | Maria | 2019-01-08 | 80 |

| 9 | Jaze | 2019-01-09 | 110 |

| 1 | Jhon | 2019-01-10 | 130 |

| 3 | Jade | 2019-01-10 | 150 |

"""

df = md2sql(sql_text, type_md, "Customer", db_config)

print(df)

(customer_id, visited_on) 是该表的主键,

visited_on 表示 customer_id 的顾客访问餐馆的日期amount 表示消费总额。

现在需要分析营业额变化增长每天至少有一位顾客。

查询计算以 7 天某日期 + 该日期前的 6 天为一个窗口的顾客消费平均值。average_amount 要保留两位小数查询结果按 visited_on 排序。

首先查询每天的营业额以及近7天的累积营业额

select

visited_on,

sum(amount) amount,

sum(sum(amount)) over(order by visited_on rows 6 preceding) accu_amount,

rank() over(order by visited_on) rn

from customer

group by visited_on

visited_on amount accu_amount rn

---------- ------ ----------- --------

2019-01-01 100 100 1

2019-01-02 110 210 2

2019-01-03 120 330 3

2019-01-04 130 460 4

2019-01-05 110 570 5

2019-01-06 140 710 6

2019-01-07 150 860 7

2019-01-08 80 840 8

2019-01-09 110 840 9

2019-01-10 280 1000 10

然后计算7日平均

select

visited_on,amount,

round(amount/least(rn,7),2) average_amount

from(

select

visited_on,

sum(sum(amount)) over(order by visited_on rows 6 preceding) amount,

rank() over(order by visited_on) rn

from customer

group by visited_on

) a;

visited_on amount average_amount

---------- ------ ----------------

2019-01-01 100 100.00

2019-01-02 210 105.00

2019-01-03 330 110.00

2019-01-04 460 115.00

2019-01-05 570 114.00

2019-01-06 710 118.33

2019-01-07 860 122.86

2019-01-08 840 120.00

2019-01-09 840 120.00

2019-01-10 1000 142.86

不过题目只需要具备7天窗口的数据

select

visited_on,amount,

round(amount/7,2) average_amount

from(

select

visited_on,

sum(sum(amount)) over(order by visited_on rows 6 preceding) amount,

rank() over(order by visited_on) rn

from customer

group by visited_on

) a

where rn>=7;

visited_on amount average_amount

---------- ------ ----------------

2019-01-07 860 122.86

2019-01-08 840 120.00

2019-01-09 840 120.00

2019-01-10 1000 142.86

数据

type_md = """

| id | int |

| Month | int |

| Salary | int |

"""

sql_text = """

| id | month | salary |

| -- | ----- | ------ |

| 1 | 1 | 20 |

| 2 | 1 | 20 |

| 1 | 2 | 30 |

| 2 | 2 | 30 |

| 3 | 2 | 40 |

| 1 | 3 | 40 |

| 3 | 3 | 60 |

| 1 | 4 | 60 |

| 3 | 4 | 70 |

| 1 | 7 | 90 |

| 1 | 8 | 90 |

"""

df = md2sql(sql_text, type_md, "Employee", db_config)

print(df)

题目要求查询每个员工除最近一个月即最大月之外剩下每个月的近三个月的累计薪水不足三个月也要计算。

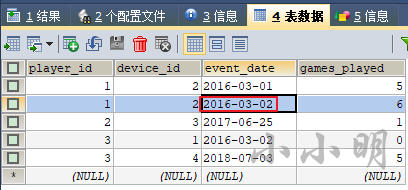

我们看看rows和range的区别

select

id,month,salary,

sum(salary) over(partition by id order by month rows 2 preceding) s1,

sum(salary) over(partition by id order by month range 2 preceding) s2

from employee;

可以清楚看到range是逻辑上窗口不连续的月默认为空rows则严格按照数据行为准。

结果要求按 Id 升序 Month 降序显示。

那么就非常简单了

select

id,month,salary

from(

select

id,month,

sum(salary) over(partition by id order by month rows 2 preceding) salary,

max(month) over(partition by id) mn

from employee

) a

where month<>mn

order by id,month desc;

结果

id month salary

------ ------ --------

1 1 20

1 2 50

1 3 90

1 4 130

1 7 190

2 1 20

3 2 40

3 3 100

或者使用rank过滤最近一个月

select

id,month,salary

from(

select

id,month,

sum(salary) over(partition by id order by month rows 2 preceding) salary,

rank() over(partition by id order by month desc) rk

from employee

) a

where rk>1

order by id,month desc;

窗口函数可以执行在group by之后

示例574. 当选者

数据

type_md = """

| id | int |

| name | varchar(20) |

"""

sql_text = """

| id | name |

| -- | ---- |

| 1 | A |

| 2 | B |

| 3 | C |

| 4 | D |

| 5 | E |

"""

df = md2sql(sql_text, type_md, "Candidate", db_config)

print(df)

type_md = """

| id | int |

| candidateId | int |

"""

sql_text = """

| id | candidateId |

| -- | ----------- |

| 1 | 2 |

| 2 | 4 |

| 3 | 3 |

| 4 | 2 |

| 5 | 5 |

"""

df = md2sql(sql_text, type_md, "Vote", db_config)

print(df)

Candidate表示候选对象的id和名称的信息Vote每一行决定了在选举中获得第i张选票的候选人。

现在要求查询出获得最多选票的候选人我们可以先查询出每个候选人获取的选票

select

name, count(b.id) cnt

from Candidate a join Vote b on a.id=b.candidateid

group by candidateid;

name cnt

------ --------

B 2

D 1

C 1

E 1

然后我们可以直接在聚合函数的基础上使用窗口函数而无需使用子查询

select

name, rank() over(order by count(b.id) desc) rn

from Candidate a join Vote b on a.id=b.candidateid

group by candidateid;

name rn

------ --------

B 1

D 2

C 2

E 2

但是mysql的having不支持对窗口函数的结果进行操作此时我们必须使用子查询得到结果

select name from(

select

name, rank() over(order by count(b.id) desc) rn

from Candidate a join Vote b on a.id=b.candidateid

group by candidateid

) t

where rn=1;

name

--------

B

当然对于本题而言题目限制了测试数据能够确保确保 只有一个候选人赢得选举。那么更简单的写法是

select

name

from Candidate a join Vote b on a.id=b.candidateid

group by candidateid

order by count(b.id) desc limit 1;

但是当可能存在多个候选人同票获得第一的情况则只能使用窗口函数。

数据

type_md = """

| order_id | int |

| customer_id | int |

| order_date | date |

| price | int |

"""

sql_text = """

| order_id | customer_id | order_date | price |

| -------- | ----------- | ---------- | ----- |

| 1 | 1 | 2019-07-01 | 1100 |

| 2 | 1 | 2019-11-01 | 1200 |

| 3 | 1 | 2020-05-26 | 3000 |

| 4 | 1 | 2021-08-31 | 3100 |

| 5 | 1 | 2022-12-07 | 4700 |

| 6 | 2 | 2015-01-01 | 700 |

| 7 | 2 | 2017-11-07 | 1000 |

| 8 | 3 | 2017-01-01 | 900 |

| 9 | 3 | 2018-11-07 | 900 |

| 11 | 6 | 2021-04-16 | 6700 |

| 10 | 6 | 2019-10-11 | 5400 |

| 23 | 6 | 2020-09-21 | 4700 |

| 17 | 6 | 2022-05-13 | 2100 |

| 18 | 6 | 2019-04-21 | 9600 |

| 15 | 6 | 2020-12-27 | 900 |

"""

df = md2sql(sql_text, type_md, "Orders", db_config)

print(df)

order_id 是该表的主键。每行包含订单的 id、订购该订单的客户 id、订单日期和价格。

查询 总购买量 每年严格增加的客户 id。

- 客户在一年内的 总购买量 是该年订单价格的总和。如果某一年客户没有下任何订单我们认为总购买量为

0。 - 对于每个客户要考虑的第一个年是他们 第一次下单 的年份。

- 对于每个客户要考虑的最后一年是他们 最后一次下单 的年份。

首先统计每个客户每年和上一年的总购买量

select

customer_id,

lag(year(order_date)) over(partition by customer_id order by year(order_date)) ly,

year(order_date) y,

lag(sum(price)) over(partition by customer_id order by year(order_date)) lp,

sum(price) price

from orders

group by customer_id,year(order_date);

customer_id ly y lp price

----------- ------ ------ ------ --------

1 (NULL) 2019 (NULL) 2300

1 2019 2020 2300 3000

1 2020 2021 3000 3100

1 2021 2022 3100 4700

2 (NULL) 2015 (NULL) 700

2 2015 2017 700 1000

3 (NULL) 2017 (NULL) 900

3 2017 2018 900 900

6 (NULL) 2019 (NULL) 15000

6 2019 2020 15000 5600

6 2020 2021 5600 6700

6 2021 2022 6700 2100

要找出每年严格增加的客户我们可先找出某年未严格增加的客户

select

distinct customer_id

from(

select

customer_id,

lag(year(order_date)) over(partition by customer_id order by year(order_date)) ly,

year(order_date) y,

lag(sum(price)) over(partition by customer_id order by year(order_date)) lp,

sum(price) price

from orders

group by customer_id,year(order_date)

) a

where ly is not null and (ly+1!=y or lp>=price);

customer_id

-------------

2

3

6

然后一个外连接过滤得到答案

select

distinct customer_id

from orders left join (

select

distinct customer_id

from(

select

customer_id,

lag(year(order_date)) over(partition by customer_id order by year(order_date)) ly,

year(order_date) y,

lag(sum(price)) over(partition by customer_id order by year(order_date)) lp,

sum(price) price

from orders

group by customer_id,year(order_date)

) a

where ly is not null and (ly+1!=y or lp>=price)

) b using(customer_id)

where b.customer_id is null;

customer_id

-------------

1

排名函数执行在order by上

数据

type_md = """

| user_id | int |

| gender | varchar(20) |

"""

sql_text = """

| user_id | gender |

| ------- | ------ |

| 4 | male |

| 7 | female |

| 2 | other |

| 5 | male |

| 3 | female |

| 8 | male |

| 6 | other |

| 1 | other |

| 9 | female |

"""

df = md2sql(sql_text, type_md, "Genders", db_config)

print(df)

user_id 是该表的主键。gender 的值是 ‘female’, ‘male’,‘other’ 之一。该表中的每一行都包含用户的 ID 及其性别。

重新排列 Genders 表使行按顺序在 'female', 'other' 和 'male' 之间交替。同时每种性别按照 user_id 升序进行排序。

select

user_id,gender

from genders

order by

rank() over(partition by gender order by user_id),

rank() over(order by length(gender) desc);

user_id gender

------- --------

3 female

1 other

4 male

7 female

2 other

5 male

9 female

6 other

8 male

由于要求的 'female', 'other' 和 'male' 的交替顺序具备字符长度递减的特征所以我们可以使用长度排序若不具备这样的特征则只能使用if或case when进行映射

select

user_id,gender

from genders

order by

rank() over(partition by gender order by user_id),

if(gender="male",2,if(gender="other",1,0));

或

select

user_id,gender

from genders

order by

rank() over(partition by gender order by user_id),

case gender

when "female" then 0

when "other" then 1

else 2

end;

排名函数实现多列分别排序

数据

type_md = """

| first_col | int |

| second_col | int |

"""

sql_text = """

| first_col | second_col |

| --------- | ---------- |

| 4 | 2 |

| 2 | 3 |

| 3 | 1 |

| 1 | 4 |

"""

df = md2sql(sql_text, type_md, "Data", db_config)

print(df)

编写 SQL 使

first_col按照 升序 排列。second_col按照 降序 排列。

思路给要排序的多列分别生成编号然后对编号进行表连接。

with cte as(

select

first_col,second_col,

row_number() over(order by first_col) rk1,

row_number() over(order by second_col desc) rk2

from data

)

select a.first_col,b.second_col

from cte a join cte b on a.rk1=b.rk2

order by a.rk1;

first_col second_col

--------- ------------

1 4

2 3

3 2

4 1

窗口函数相减为负数会报错

这是因为窗口函数的结果为无符号整数类型UNSIGNED这时应该使用cast(expression as data_type)将其转换为整数类型常见的类型有

- 可带参数 : CHAR()

- 日期 : DATE

- 时间: TIME

- 日期时间型 : DATETIME

- 浮点数 : DECIMAL

- 整数 : SIGNED

- 无符号整数 : UNSIGNED

数据

type_md = """

| team_id | int |

| name | varchar(20) |

| points | int |

"""

sql_text = """

| team_id | name | points |

| ------- | ----------- | ------ |

| 3 | Algeria | 1431 |

| 1 | Senegal | 2132 |

| 2 | New Zealand | 1402 |

| 4 | Croatia | 1817 |

"""

df = md2sql(sql_text, type_md, "TeamPoints", db_config)

print(df)

type_md = """

| team_id | int |

| points_change | int |

"""

sql_text = """

| team_id | points_change |

| ------- | ------------- |

| 3 | 399 |

| 2 | 0 |

| 4 | 13 |

| 1 | -22 |

"""

df = md2sql(sql_text, type_md, "PointsChange", db_config)

print(df)

表TeamPointsteam_id 是主键每一行代表一支国家队在全球排名中的得分。没有两支队伍代表同一个国家。

表PointsChangeteam_id 是这张表的主键。每一行代表一支国家队在世界排名中的得分的变化。0:代表分数没有改变正数:代表分数增加负数:代表分数降低。TeamPoints 表中出现的每一个 team_id 均会在这张表中出现。

每支国家队的分数应根据其相应的 points_change 进行更新。查询来计算在分数更新后每个队伍的全球排名的变化。

首先查询每支国家队之前的排名和分数变化的排名

select

a.team_id,a.name,

rank() over(order by points desc,name) rn1,

rank() over(order by points+points_change desc,name) rn2

from teampoints a join pointschange b using(team_id);

team_id name rn1 rn2

------- ----------- ------ --------

1 Senegal 1 1

3 Algeria 3 2

4 Croatia 2 3

2 New Zealand 4 4

由于rank函数的返回值是unsigned类型如果我们直接使用rn1-rn2直接相减会得到错误BIGINT UNSIGNED value is out of range

此时我们需要转换类型后再相减

select

team_id,name,

cast(rn1 as signed)-cast(rn2 as signed) rank_diff

from(

select

a.team_id,a.name,

rank() over(order by points desc,name) rn1,

rank() over(order by points+points_change desc,name) rn2

from teampoints a join pointschange b using(team_id)

) c;

team_id name rank_diff

------- ----------- -----------

1 Senegal 0

3 Algeria 1

4 Croatia -1

2 New Zealand 0

顺利得到最终答案。

判断连续性

是否连续相等

数据

type_md = """

+-------------+---------+

| Column Name | Type |

+-------------+---------+

| id | int |

| num | varchar(20) |

+-------------+---------+

"""

sql_text = """

+----+-----+

| Id | Num |

+----+-----+

| 1 | 1 |

| 2 | 1 |

| 3 | 1 |

| 4 | 2 |

| 5 | 1 |

| 6 | 2 |

| 7 | 2 |

+----+-----+

"""

df = md2sql(sql_text, type_md, "Logs", db_config)

print(df)

lag+sum判断连续性

select

num,count(1) cnt

from(

select

id, num,

sum(t) over(order by id) g

from(

select

id, num,

num!=lag(num,1,0) over(order by id) t

from logs

) a

) b

group by num,g;

结果

num cnt

------ --------

1 3

2 1

1 1

2 2

双row_number序号排名判断连续性

select

num,count(1) cnt

from(

select

id, num,

row_number() over(order by id) -

row_number() over(partition by num order by id) g

from logs

) a

group by num,g;

与上述结果一致。

题目要求查找所有至少连续出现三次的数字只需

select

distinct num ConsecutiveNums

from(

select

id, num,

row_number() over(order by id) -

row_number() over(partition by num order by id) g

from logs

) a

group by num,g

having count(1)>=3;

显然后者更简单。

数据

type_md = """

| seat_id | int |

| free | smallint |

"""

sql_text = """

| seat_id | free |

+---------+------+

| 1 | 1 |

| 2 | 0 |

| 3 | 1 |

| 4 | 1 |

| 5 | 1 |

"""

df = md2sql(sql_text, type_md, "Cinema", db_config)

print(df)

每一行表示第i个座位是否空闲。1表示空闲0表示被占用。

查询所有连续可用的座位按 seat_id 升序排序

select

seat_id

from(

select

seat_id,count(1) over(partition by rn1-rn2) g

from(

select

seat_id,free,

row_number() over(order by seat_id) rn1,

row_number() over(partition by free order by seat_id) rn2

from cinema

) a where free=1

) b

where g>1 order by seat_id;

当然针对本题只需判断一次连续简易解法为

select

distinct a.seat_id

from cinema a join cinema b

on abs(a.seat_id-b.seat_id)=1

and a.free=1 and b.free=1

order by a.seat_id;

seat_id

---------

3

4

5

是否连续为某个固定值

数据

type_md = """

| player_id | int |

| match_day | date |

| result | enum('Win','Draw','Lose') |

"""

sql_text = """

| player_id | match_day | result |

| --------- | ---------- | ------ |

| 1 | 2022-01-17 | Win |

| 1 | 2022-01-18 | Win |

| 1 | 2022-01-25 | Win |

| 1 | 2022-01-31 | Draw |

| 1 | 2022-02-08 | Win |

| 2 | 2022-02-06 | Lose |

| 2 | 2022-02-08 | Lose |

| 3 | 2022-03-30 | Win |

"""

df = md2sql(sql_text, type_md, "Matches", db_config)

print(df)

选手的 连胜数 是指连续获胜的次数且没有被平局或输球中断。

写一个SQL 语句来计算每个参赛选手最多的连胜数。

本题本质上是求每个选手最大的连续为Win的次数。

如果需要求分组内连续性可以使用如下代码

select

player_id,result,

row_number() over(partition by player_id order by match_day) -

row_number() over(partition by player_id,result order by match_day) g

from matches;

player_id result g

--------- ------ --------

1 Win 0

1 Win 0

1 Win 0

1 Win 1

1 Draw 3

2 Lose 0

2 Lose 0

3 Win 0

但是本题只需要求每个选手的连续win

select

player_id,result,

sum(result!="Win") over(partition by player_id order by match_day) g

from matches;

player_id result g

--------- ------ --------

1 Win 0

1 Win 0

1 Win 0

1 Draw 1

1 Win 1

2 Lose 1

2 Lose 2

3 Win 0

然后求得每个选手的连胜数

select

player_id,sum(result="Win") cnt

from(

select

player_id,result,

sum(result!="Win") over(partition by player_id order by match_day) g

from matches

) a

group by player_id,g;

player_id cnt

--------- --------

1 3

1 1

2 0

2 0

3 1

最终求得每个用户的最大连胜数

select

player_id,max(cnt) longest_streak

from(

select

player_id,sum(result="Win") cnt

from(

select

player_id,result,

sum(result!="Win") over(partition by player_id order by match_day) g

from matches

) a

group by player_id,g

) b

group by 1;

player_id longest_streak

--------- ----------------

1 3

2 0

3 1

数字是否连续递增

数据

type_md = """

| id | int |

| visit_date | date |

| people | int |

"""

sql_text = """

+------+------------+-----------+

| id | visit_date | people |

+------+------------+-----------+

| 1 | 2017-01-01 | 10 |

| 2 | 2017-01-02 | 109 |

| 3 | 2017-01-03 | 150 |

| 4 | 2017-01-04 | 99 |

| 5 | 2017-01-05 | 145 |

| 6 | 2017-01-06 | 1455 |

| 7 | 2017-01-07 | 199 |

| 8 | 2017-01-09 | 188 |

+------+------------+-----------+

"""

df = md2sql(sql_text, type_md, "Stadium", db_config)

print(df)

要求找出人数大于等于100并且id连续3行以上的记录。

我们可以在过滤后给每行一个连续性判断的标记

select

id,visit_date,people,

id-rank() over(order by id) g

from stadium

where people>=100;

id visit_date people g

------ ---------- ------ --------

2 2017-01-02 109 1

3 2017-01-03 150 1

5 2017-01-05 145 2

6 2017-01-06 1455 2

7 2017-01-07 199 2

8 2017-01-09 188 2

可以看到连续的id都被标记了相同组号接下来我们继续找到拥有三条记录以上的组

select

id,visit_date,people

from(

select

id,visit_date,people,

count(1) over(partition by g) n

from(

select

id,visit_date,people,

id-rank() over(order by id) g

from stadium

where people>=100

) a

) b

where n>=3;

结果

id visit_date people

------ ---------- --------

5 2017-01-05 145

6 2017-01-06 1455

7 2017-01-07 199

8 2017-01-09 188

数字连续递增区间

数据

type_md = """

| log_id | int |

"""

sql_text = """

| log_id |

| ------ |

| 1 |

| 2 |

| 3 |

| 7 |

| 8 |

| 10 |

"""

df = md2sql(sql_text, type_md, "Logs", db_config)

print(df)

查询得到 Logs 表中的连续区间的开始数字和结束数字结果按照 start_id 排序。

select

min(log_id) start_id,max(log_id) end_id

from(

select

log_id,

log_id-rank() over(order by log_id) g

from logs

) a

group by g;

start_id end_id

-------- --------

1 3

7 8

10 10

日期是否连续按年递增

这本质上依然是一个数字递增的问题因为日期取年份是数字。

数据

type_md = """

| order_id | int |

| product_id | int |

| quantity | int |

| purchase_date | date |

"""

sql_text = """

| order_id | product_id | quantity | purchase_date |

| -------- | ---------- | -------- | ------------- |

| 1 | 1 | 7 | 2020-03-16 |

| 2 | 1 | 4 | 2020-12-02 |

| 3 | 1 | 7 | 2020-05-10 |

| 4 | 1 | 6 | 2021-12-23 |

| 5 | 1 | 5 | 2021-05-21 |

| 6 | 1 | 6 | 2021-10-11 |

| 7 | 2 | 6 | 2022-10-11 |

"""

df = md2sql(sql_text, type_md, "Orders", db_config)

print(df)

order_id 是该表的主键。每一行都包含订单 ID、购买的产品 ID、数量和购买日期。

查询连续两年订购三次或三次以上的所有产品的 id。

首先筛选某年订购三次以上的产品并进行连续年份标记

select

product_id,

year(purchase_date),

year(purchase_date)-rank() over(partition by product_id order by year(purchase_date)) g

from orders

group by product_id,year(purchase_date)

having count(1)>=3;

product_id year(purchase_date) g

---------- ------------------- --------

1 2020 2019

1 2021 2019

最后判断是否能够连续2年以上

select

distinct product_id

from (

select

product_id,

year(purchase_date)-rank() over(partition by product_id order by year(purchase_date)) g

from orders

group by product_id,year(purchase_date)

having count(1)>=3

) a

group by product_id,g

having count(1)>1;

product_id

------------

1

日期是否连续按天递增

数据

type_md = """

| fail_date | date |

"""

sql_text = """

| fail_date |

+-------------------+

| 2018-12-28 |

| 2018-12-29 |

| 2019-01-04 |