A Detailed Guide to Using the Flume Kafka Channel


1 Official documentation

Documentation -> Flume User Guide

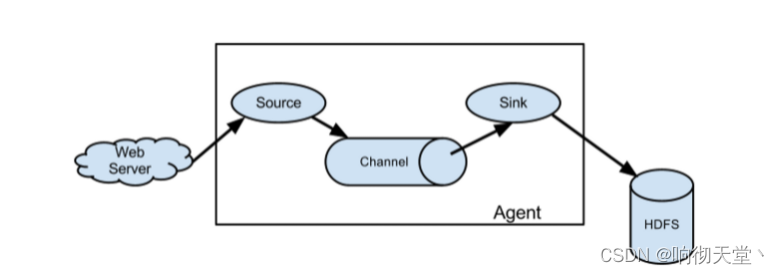

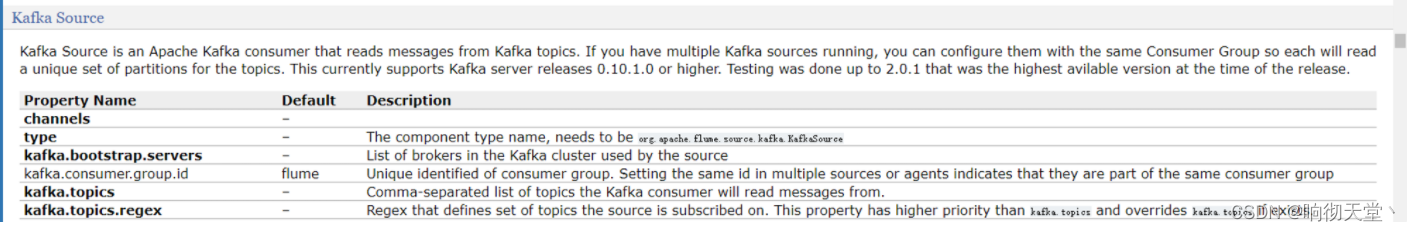

2 Kafka source (consumer)

Kafka Source is an Apache Kafka consumer that reads messages from Kafka topics. If you have multiple Kafka sources running, you can configure them with the same Consumer Group so each will read a unique set of partitions for the topics. This currently supports Kafka server releases 0.10.1.0 or higher. Testing was done up to 2.0.1, which was the highest available version at the time of the release.
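As a rough illustration of the consumer-group behavior described above, a Kafka source could be configured along the lines of the sketch below. The agent and component names (`a1`, `r1`, `c1`), the topic `topic_log`, the group ID `flume-source-group`, and the memory channel are placeholders chosen for this example; the broker address reuses the `hadoop4:9092` host from the hands-on section. A sink would still have to be attached to the channel for a complete agent.

```properties
# Sketch only: names, topic, and group ID are assumptions for illustration
a1.sources = r1
a1.channels = c1

a1.sources.r1.type = org.apache.flume.source.kafka.KafkaSource
a1.sources.r1.kafka.bootstrap.servers = hadoop4:9092
a1.sources.r1.kafka.topics = topic_log
# Kafka sources that share this group ID split the topic's partitions between them
a1.sources.r1.kafka.consumer.group.id = flume-source-group

# Placeholder channel so the source has somewhere to write
a1.channels.c1.type = memory
a1.sources.r1.channels = c1
```

Running a second agent with the same `kafka.consumer.group.id` should cause Kafka to rebalance the partitions between the two sources, provided the topic has more than one partition.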

3 Kafka sink (producer)

This is a Flume Sink implementation that can publish data to a Kafka topic. One of the objectives is to integrate Flume with Kafka so that pull-based processing systems can process the data coming through various Flume sources.

This currently supports Kafka server releases 0.10.1.0 or higher. Testing was done up to 2.0.1, which was the highest available version at the time of the release.

Required properties are marked in bold font.

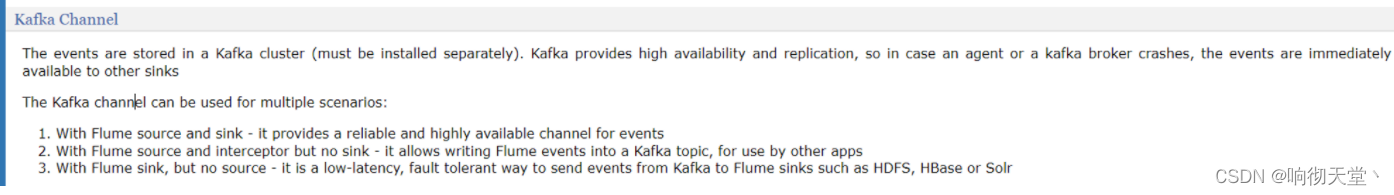
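For orientation, a Kafka sink definition might look like the fragment below. This is a sketch under assumptions: the names `a1`, `k1`, and `c1` and the topic `topic_log` are placeholders, the channel `c1` is presumed to be defined elsewhere in the same file, and the `kafka.producer.*` lines are ordinary Kafka producer settings passed through by Flume.

```properties
# Sketch only: component names and topic are assumptions for illustration
a1.sinks = k1

a1.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink
a1.sinks.k1.kafka.bootstrap.servers = hadoop4:9092
a1.sinks.k1.kafka.topic = topic_log
# Properties under kafka.producer.* are handed to the Kafka producer
a1.sinks.k1.kafka.producer.acks = 1
a1.sinks.k1.kafka.producer.linger.ms = 1

# c1 is assumed to be declared elsewhere in this agent's configuration
a1.sinks.k1.channel = c1
```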

4 Kafka Channel

4.1 First usage

With Flume source and sink - it provides a reliable and highly available channel for events
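A minimal sketch of this first pattern is shown below, assuming a netcat source and a logger sink purely for demonstration. The component names, the port, and the topic name are illustrative choices, not taken from the original setup. Events pass from the source into a Kafka topic and are then read back out by the sink, so the topic acts as the durable buffer between the two.

```properties
# Sketch only: source, sink, port, and topic are assumptions for illustration
a1.sources = r1
a1.channels = c1
a1.sinks = k1

a1.sources.r1.type = netcat
a1.sources.r1.bind = localhost
a1.sources.r1.port = 44444

# The Kafka topic is the buffer between source and sink
a1.channels.c1.type = org.apache.flume.channel.kafka.KafkaChannel
a1.channels.c1.kafka.bootstrap.servers = hadoop4:9092
a1.channels.c1.kafka.topic = flume-channel

a1.sinks.k1.type = logger

a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
```

In this pattern `parseAsFlumeEvent` is left at its default of `true`, so event headers survive the round trip through Kafka.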

4.2 Second usage

With Flume source and interceptor but no sink - it allows writing Flume events into a Kafka topic, for use by other apps
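To make the "source and interceptor but no sink" pattern concrete, the fragment below sketches a TAILDIR source with a `regex_filter` interceptor in front of a Kafka channel. The interceptor name `i1` and the `.*DEBUG.*` pattern are hypothetical and only illustrate where an interceptor plugs in; the file path, broker, and topic reuse the values from the hands-on section.

```properties
# Sketch only: the interceptor and its regex are assumptions for illustration
a1.sources = r1
a1.channels = c1

a1.sources.r1.type = TAILDIR
a1.sources.r1.filegroups = f1
a1.sources.r1.filegroups.f1 = /usr/local/mock-log/logs/app.*

# Drop every event whose body matches the regex before it reaches Kafka
a1.sources.r1.interceptors = i1
a1.sources.r1.interceptors.i1.type = regex_filter
a1.sources.r1.interceptors.i1.regex = .*DEBUG.*
a1.sources.r1.interceptors.i1.excludeEvents = true

a1.channels.c1.type = org.apache.flume.channel.kafka.KafkaChannel
a1.channels.c1.kafka.bootstrap.servers = hadoop4:9092
a1.channels.c1.kafka.topic = topic_log
# Write plain message bodies so non-Flume consumers can read the topic
a1.channels.c1.parseAsFlumeEvent = false

a1.sources.r1.channels = c1
```

No sink is declared, so the events simply stay in the topic until another application consumes them.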

4.3 Third usage

With Flume sink, but no source - it is a low-latency, fault tolerant way to send events from Kafka to Flume sinks such as HDFS, HBase or Solr

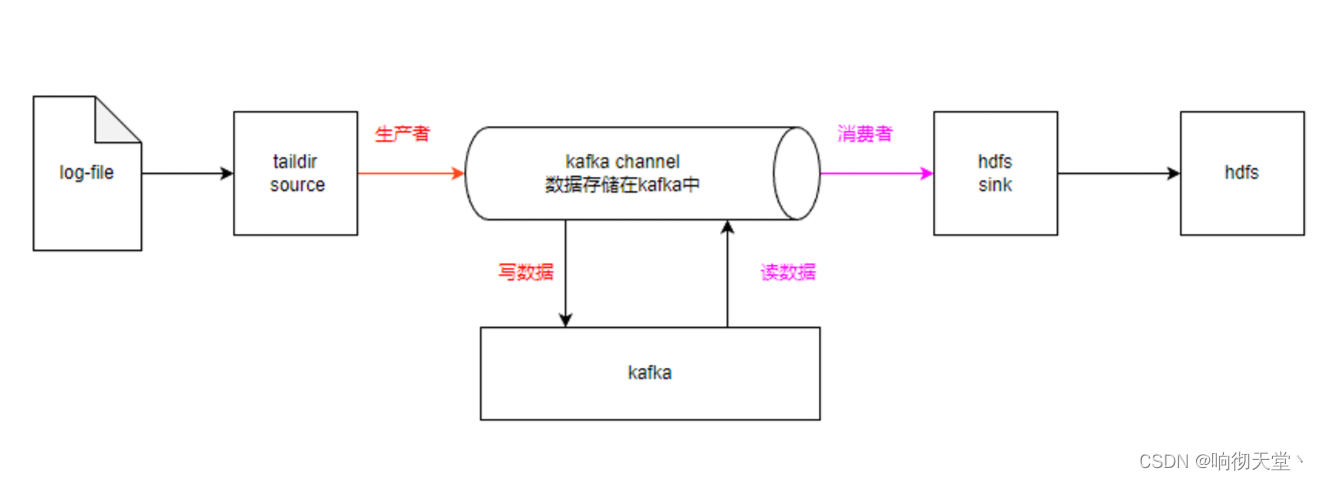

5 Hands-on

5.1 Reading data with Flume and writing it to Kafka

Create the configuration file

vim file_to_kafka.conf

# Define components

a1.sources = r1

a1.channels = c1

# Configure the source

a1.sources.r1.type = TAILDIR

a1.sources.r1.filegroups = f1

# Files to monitor (the regex applies to the file name only)

a1.sources.r1.filegroups.f1 = /usr/local/mock-log/logs/app.*

# Position file used to resume reading after a restart

a1.sources.r1.positionFile = /usr/local/flume-1.9.0/taildir_position.json

# Configure the channel

a1.channels.c1.type = org.apache.flume.channel.kafka.KafkaChannel

a1.channels.c1.kafka.bootstrap.servers = hadoop4:9092

a1.channels.c1.kafka.topic = topic_log

# Write only the event body to Kafka, without Flume event headers

a1.channels.c1.parseAsFlumeEvent = false

# Bind the source to the channel

a1.sources.r1.channels = c1

Start Flume

nohup bin/flume-ng agent -n a1 -c conf/ -f job/file_to_kafka.conf -Dflume.root.logger=info,console >flume.log 2>&1 &

Start a Kafka console consumer

docker exec -it kafka /bin/bash

cd /opt/kafka/bin

./kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic topic_log

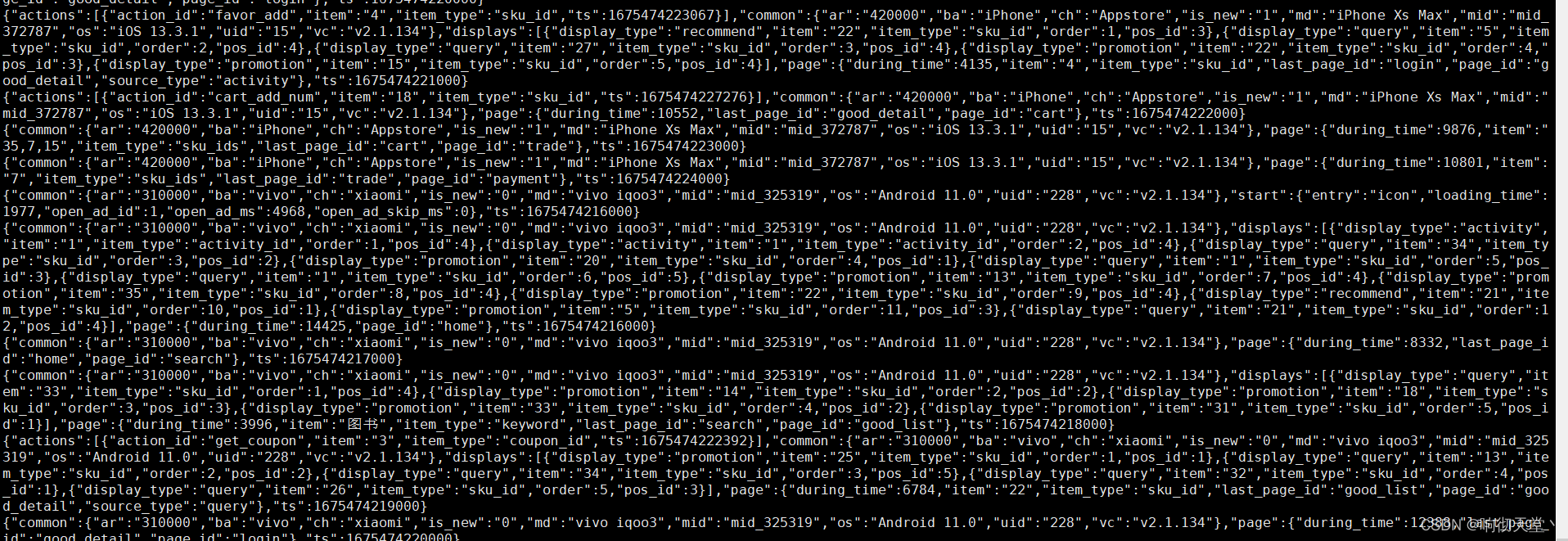

Generate mock logs and check the consumer output in Kafka

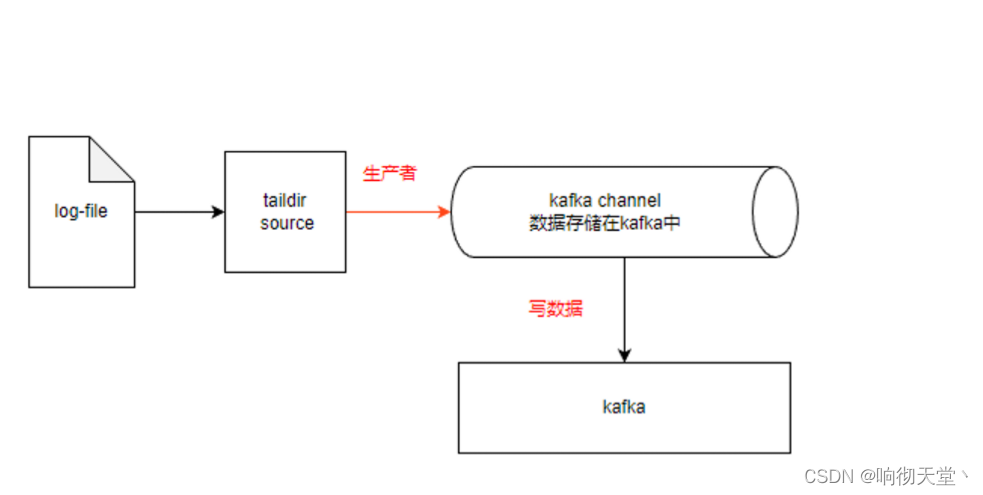

5.2 Reading Kafka messages through the Flume channel and writing them to HDFS

Create the configuration file

# Define components

a1.channels = c1

a1.sinks = k1

# Configure the channel

a1.channels.c1.type = org.apache.flume.channel.kafka.KafkaChannel

a1.channels.c1.kafka.bootstrap.servers = hadoop4:9092

a1.channels.c1.kafka.topic = topic_log

a1.channels.c1.kafka.consumer.group.id = flume-consumer

a1.channels.c1.parseAsFlumeEvent = false

# Configure the sink

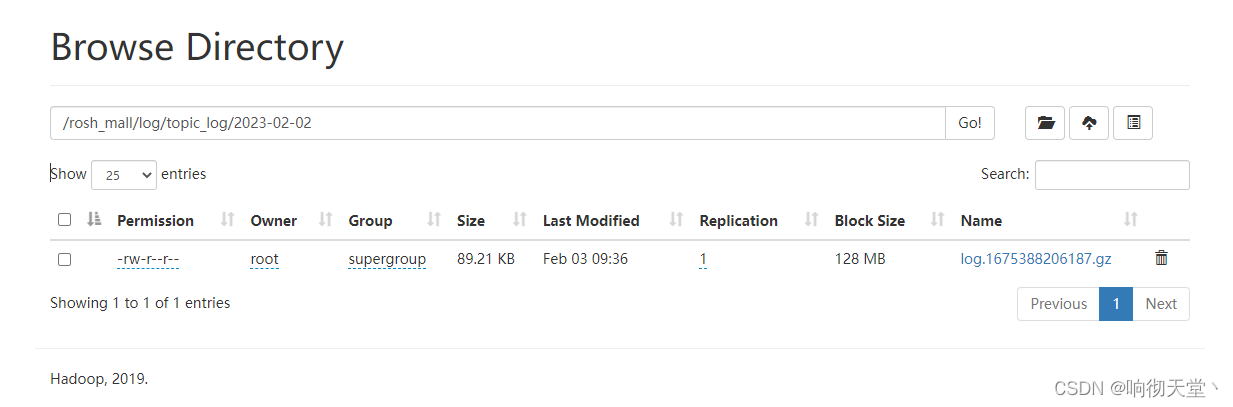

a1.sinks.k1.type = hdfs

a1.sinks.k1.hdfs.path = /rosh_mall/log/topic_log/%Y-%m-%d

# File name prefix

a1.sinks.k1.hdfs.filePrefix = log

a1.sinks.k1.hdfs.useLocalTimeStamp = true

# Roll to a new HDFS file every 10 seconds or at 128 MB, whichever comes first

a1.sinks.k1.hdfs.rollInterval = 10

a1.sinks.k1.hdfs.rollSize = 134217728

a1.sinks.k1.hdfs.rollCount = 0

# Output file type and compression codec

a1.sinks.k1.hdfs.fileType = CompressedStream

a1.sinks.k1.hdfs.codeC = gzip

# Bind the sink to the channel

a1.sinks.k1.channel = c1
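One optional addition, offered as an assumption rather than part of the original setup: when the consumer group has no committed offset yet, the Kafka consumer's `auto.offset.reset` setting decides where reading begins, and the Flume documentation lists `latest` as the default for the Kafka channel. If messages already sitting in `topic_log` should also reach HDFS on the first run, a line like the following could be added to the channel section.

```properties
# Assumption for illustration: start from the earliest retained message
# when the consumer group has no committed offset
a1.channels.c1.kafka.consumer.auto.offset.reset = earliest
```

Once the group has committed offsets, this setting no longer has any effect and the agent resumes from where it left off.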

Start

# Start the Flume agent named a1 with the configuration file

nohup bin/flume-ng agent -n a1 -c conf/ -f job/kafka_to_hdfs.conf -Dflume.root.logger=info,console >flume.log 2>&1 &

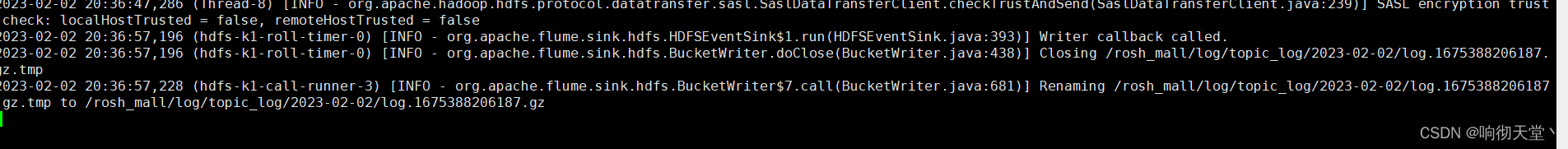

Check the log

tail -f flume.log